Computers Watching Movies

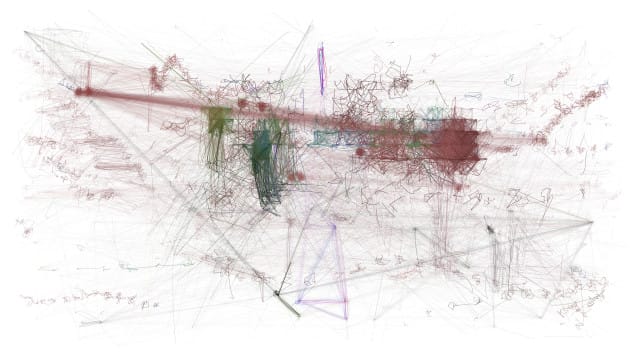

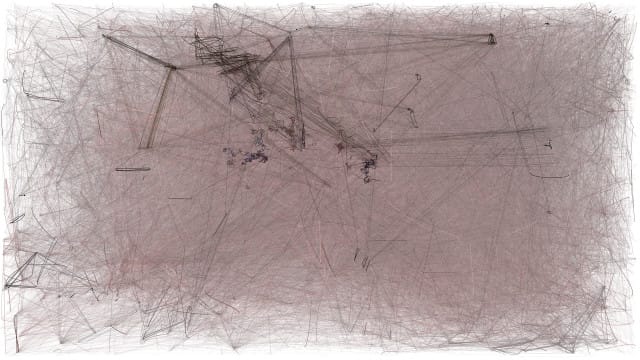

Ben Grosser’s work is all about how software permeates and affects much of our lives. As we begin to realize the extent to which we rely on algorithms for major societal functions such as search engine results, stock trading, mass surveillance, and more, our focus remains on what the machines are doing for us humans. “Computers Watching Movies” (2013) reverses that idea, offering a glimpse into how an algorithm sees the human world for its own sake. Grosser designed a program to watch popular movies and describe its act of viewing. The resulting video is a live documentation of machine vision as Grosser designed it.

While big explosions and beautiful women are the biggest draws to our society’s eyes, Grosser’s algorithm has a different set of interests and motives. It eschews content in favor of very precise movements, colors, and activities, some of them possibly too subtle for the human eye to detect. However, the algorithmic objectivity of machine vision is a fallacy; Grosser has undeniably embedded his own desires, skills, and interests into “Computers Watching Movies.” I asked Grosser some questions over email to better understand his work.

* * *

Ben Valentine: What prompted this project?

Ben Grosser: With all of the machine vision and New Aesthetic discussions going on over the last couple years, I started to notice that portrayals of computer vision are typically based on what it does for humans, such as face identification for automated surveillance or car tracking for traffic analysis. That got me wondering what computer vision would look like if it wasn’t so instrumental, but instead showed the computer watching something for its own purposes rather than ours. What would computers see if they watched the movies?

As with many new works, this one also builds on older ones. In particular, part of my work on “Speed of Reality” was to engage in hyper-analysis of reality TV editing styles (so I could later turn what I saw into an algorithm to drive multiple computer-controlled cameras). With this analysis having come before “Computers Watching Movies,” I was already attuned to trying to understand why *I* watch what I watch during a show, and thinking about how that relates to machine vision.

Further, questions about machine vision — as part of a broader drive to understand the cultural, social, and political implications of software — have been a recurring theme in my work over the last few years.

BV: Can you describe how the algorithm is watching the movies?

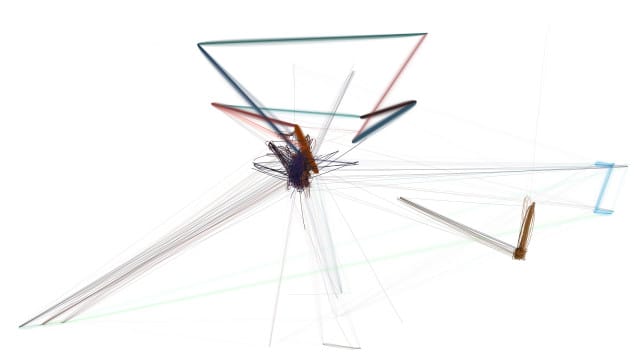

BG: The software I wrote uses computer vision algorithms to “watch” each movie clip for areas of prominence, patterns, colors, etc., and uses those algorithms to identify and track items of interest. These items might be faces or buildings or signs or landscape elements. Whatever it is, how the software decides what to focus on is driven by simple intelligence algorithms, namely stochastic processes, that give the software some degree of agency. When it chooses something to “look” at, it represents that act of looking through an interactive temporal “sketching” process. In other words, the system draws in response to what it sees.
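For readers who want a concrete picture of the loop Grosser describes (find areas of prominence, choose one by a stochastic process, record the act of looking as a drawing), here is a minimal sketch. It is not Grosser's software: the interview does not say how prominence is measured, so this example assumes plain frame-to-frame brightness change, and the names `prominence`, `choose_focus`, and `watch` are hypothetical. Frames are small grayscale grids written as lists of rows.

```python
import random


def prominence(prev, curr):
    """Per-pixel absolute brightness change between two grayscale frames.

    Assumption: "prominence" here just means "how much this pixel changed".
    """
    return [[abs(c - p) for p, c in zip(prev_row, curr_row)]
            for prev_row, curr_row in zip(prev, curr)]


def choose_focus(salience, rng):
    """Stochastically pick one (row, col), weighted by its salience.

    Returns None when nothing in the frame changed.
    """
    cells = [(r, c) for r, row in enumerate(salience) for c in range(len(row))]
    weights = [salience[r][c] for r, c in cells]
    if sum(weights) == 0:
        return None
    return rng.choices(cells, weights=weights, k=1)[0]


def watch(frames, seed=0):
    """Return the "sketch": the sequence of points the program looked at."""
    rng = random.Random(seed)
    path = []
    for prev, curr in zip(frames, frames[1:]):
        focus = choose_focus(prominence(prev, curr), rng)
        if focus is not None:
            path.append(focus)
    return path


# A bright dot appears at (1, 1), then moves to (2, 2).
blank = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]
dot_center = [[0, 0, 0], [0, 255, 0], [0, 0, 0]]
dot_corner = [[0, 0, 0], [0, 0, 0], [0, 0, 255]]

sketch = watch([blank, dot_center, dot_corner])
print(sketch)  # first point is always (1, 1); the second depends on the seed
```

Because the choice is weighted rather than fixed, two runs with different seeds can look at different parts of the same scene, which is one simple way to read the "degree of agency" mentioned above. Connecting successive points in `sketch` with line segments would give a crude version of the temporal drawing.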

BV: Alexis Lloyd, Creative Director of R&D at the New York Times, recently wrote about the importance of humans staying aware of these algorithms. As a society we are becoming increasingly reliant on algorithmic processes which most of us hardly understand. Is it important to make laypeople aware of how computational mechanisms work? What about machine vision can be learned from this piece?

BG: This is a great question, and one I think about a lot.

Software is now involved in nearly every aspect of our daily lives. It runs our banks, our phones, our cars, our refrigerators. Software tells us how to get somewhere, answers our questions, and suggests which film or book we might like next. Yet most people not only don’t know how these systems work or why they do what they do, but they presume that software systems are neutral actors. This is a problem because software is not neutral—software comes with built-in biases from those who develop it, those who run the corporations who employ software developers, and increasingly, I would argue, its biases come from software itself.

As we continue to develop software systems with more and more agency via artificial intelligence, how is that software evolving to do things for its own purposes? When law enforcement builds a vision system to watch for criminal activity, does that system “want” to find it? When the NSA writes algorithms to capture and search all communications for terrorist activity, will that system prefer to identify increasing amounts of correspondence as suspect? In other words, are these systems developing computational agency? At the very least, these algorithms are prescribing certain kinds of behaviors and ways of thinking.

Many of my works explore these types of questions, from my “Interactive Robotic Painting Machine” (what does it mean for human creativity when a computational system paints its own artworks?), to “Facebook Demetricator” (how does an interface that foregrounds our friend count change our conceptions of friendship?), to “ScareMail” (what happens with NSA search systems when you give them more of what they already want?).

“Computers Watching Movies” asks us to consider how computer vision differs from human vision, and what that difference reveals about our culturally-developed ways of looking. Why do we watch what we watch when we watch it? Will a system without our sense of narrative or historical patterns of vision watch the same things?

As a specific point of comparison between human and machine vision, I think the results from Inception most clearly illustrate how we see things differently than machines. The computer sees the explosions halfway through that clip as a series of small rapid changes, so many that its representation of those changes obliterates the drawings it created before that point. But when watching that clip myself, I watch mostly the origins of the explosions and, as much as anything else, focus on those aspects of the frame that aren’t moving. I’m left wondering why the computer and I see things so differently. And what will this difference mean when we give more and more of our vision-related tasks over to computation (such as the latest NSA revelation regarding facial recognition)?