Listening Is the New Performing

The Whitney Museum of American Art made a particularly savvy choice by teaming up with Issue Project Room to present David Rosenboom’s Propositional Music, a three-day concert series spanning 50 years of his extraordinary compositions.

The Whitney Museum of American Art, happily ensconced in its new digs on Gansevoort Street, made a particularly savvy choice by teaming up with Issue Project Room to present David Rosenboom’s Propositional Music, a three-day concert series spanning 50 years of his extraordinary compositions, inside the museum’s intimate third-floor theater. The museum also threw down the gauntlet in terms of human-computer interfaces, especially with what is referred to as BCMI, or Brain-Computer Music Interfacing. Though the field is not new, it is unfamiliar to most museum visitors.

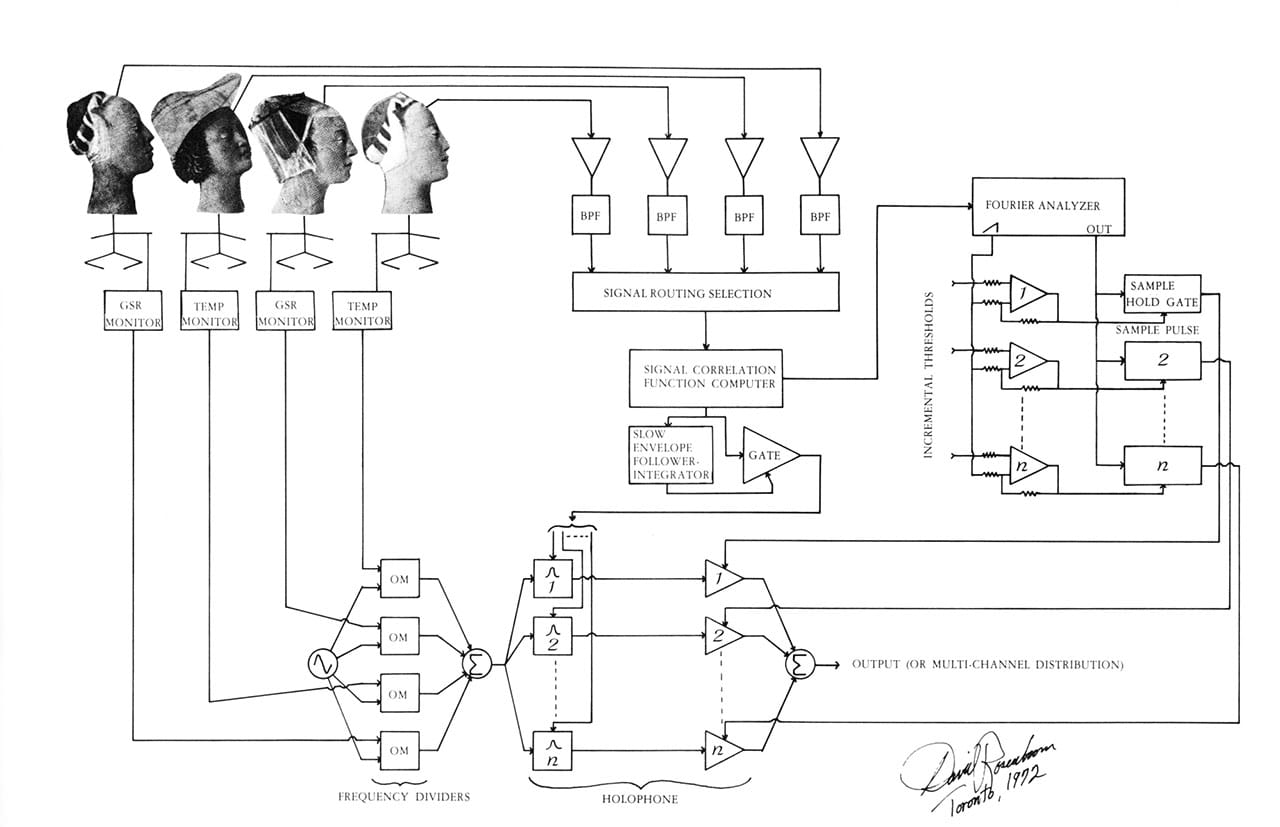

Rosenboom, an iconic figure in the world of experimental music, is part of a triumvirate with Richard Teitelbaum and Alvin Lucier, who in the late 1960s and 1970s were so truly avant-garde that, among other things, they developed “brainwave music,” a form that translates electroencephalogram (EEG) signals into sound (though you could throw Nam June Paik and John Cage into that mix for good measure). Rosenboom’s contributions started as early as 1972 with “Portable Gold and Philosophers Stones,” which used a circuit that processed not only the brain waves but also the body temperature and skin responses of four performers.

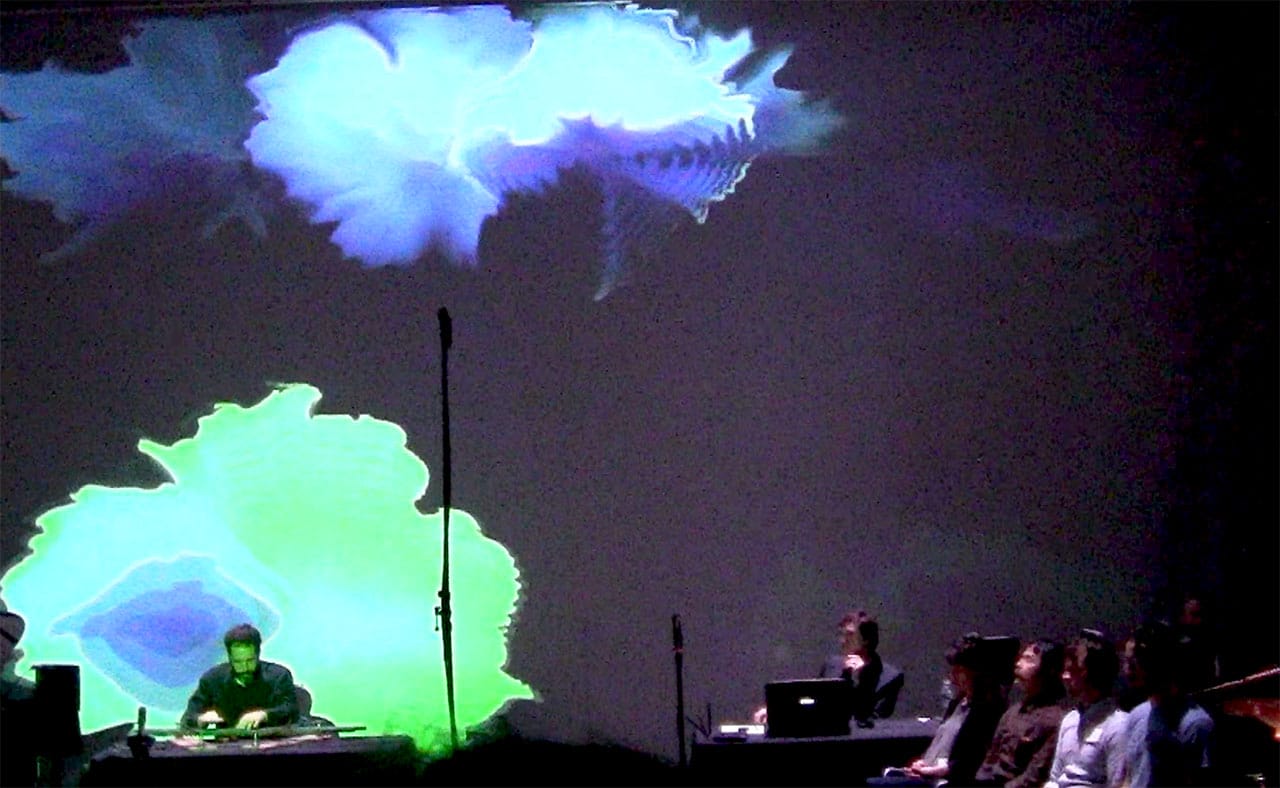

Rosenboom presented his 2014 piece “Ringing Mind—Collective Brain Responses Interacting in a Spontaneous Musical Landscape,” made in collaboration with computational neuroscientist and musician Tim Mullen and cognitive scientist and performer-composer Alex Khalil. Four volunteers were fitted with custom-made Cognionics EEG headsets that Rosenboom said resembled “the feet of a spider.” Using a technique he called “hyperscanning,” Rosenboom merged the volunteers’ brain waves into one big brain, or “hyper brain.” He then selected dominant resonant patterns, or ERPs (event-related potentials), and sonified them as electronic sounds. Live music was also played, including an electric violin and a lithoharp (a xylophone-like instrument made of stone). As the collective brains responded to the live music, the resonator fields responded with a unique set of merged brain wave signals. These wave patterns, he said, were turned into generative projected fields “like sped up clouds” behind the performers, changing color according to the collective brain frequency.

Rosenboom explained, “The role of the EEG people is to create active listeners. Listening is composing; that is a creative act,” and then added, “listening is the performing act.” He said he lined up the performers in chairs on the right side of the performing space to “substitute for the brass section.” Even the special computational “extractor tools” and the headsets were part of his composer’s palette, turning everyone in the room into part of the performing experience. The musical sounds included squiggles, squirts, bells, wobbles, whistles, trills, pulled violin strings, and mysteriously played keys on a vacant piano, while colored explosions of purple, pale blue, indigo, and neon green reflected brainwave frequencies.

Rosenboom has been obsessed with this type of performance for over 40 years, long before there was adequate portable technology that could easily bring it inside a museum like the Whitney. Now that the technology has arrived, what will the next 40 years reveal?

“Ringing Mind — Collective Brain Responses Interacting in a Spontaneous Musical Landscape” by David Rosenboom took place on May 23 at the Whitney Museum of American Art (99 Gansevoort Street, Meatpacking District, Manhattan).