Amazon Facial Recognition Falsely Links 27 Athletes to Mugshots in ACLU Study

The Massachusetts chapter of the American Civil Liberties Union reported that Amazon’s “Rekognition” technology falsely linked the faces of 27 professional athletes in New England to mugshots in a criminal database.

In the case of Amazon’s facial recognition software, “recognition” might be a misnomer.

The Massachusetts chapter of the American Civil Liberties Union (ACLU) announced this month that Amazon’s “Rekognition” technology falsely linked the faces of 27 professional athletes in New England to mugshots in a criminal database.

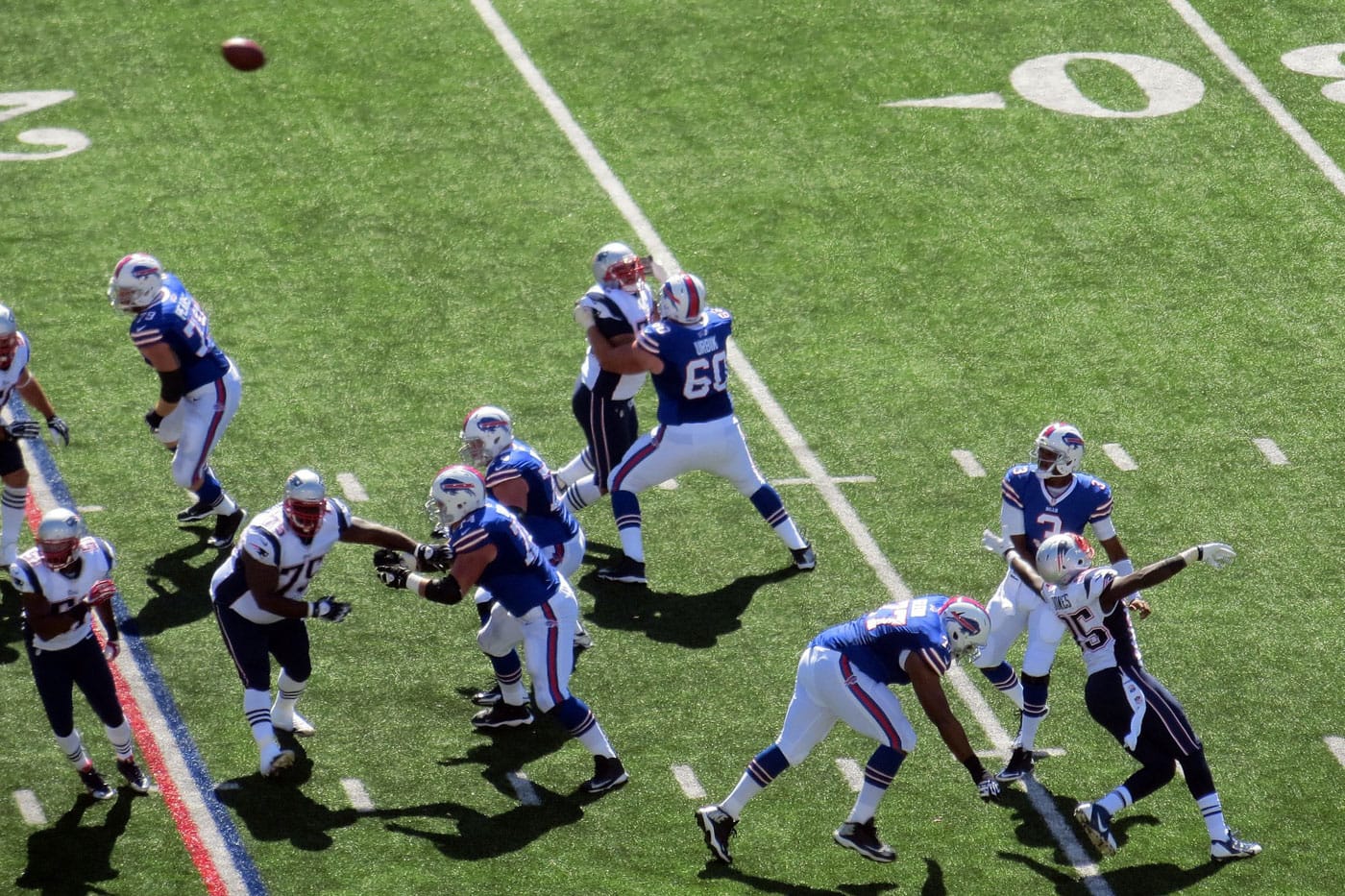

As part of a probe on face surveillance, the Massachusetts ACLU ran photos of 188 local athletes against a database of 20,000 mugshots. Among the misidentified were Patriots running back James White, Red Sox pitcher Chris Sale, and three-time Super Bowl winner and Patriots safety Duron Harmon. (Of the 27 misidentifications, about half were Patriots players.)

“This technology is flawed,” Harmon told the ACLU. “If it misidentified me, my teammates, and other professional athletes in an experiment, imagine the real-life impact of false matches.”

As if on cue, this revelation comes just as Massachusetts considers legislation on the subject. A bill sponsored by the state’s chapter of the ACLU asks that tech companies honor a moratorium on facial recognition until proper guardrails — and a national precedent — are in place.

Roughly two years ago, Amazon began peddling its tech to law enforcement, promising a speedy solution for over-taxed and under-staffed agencies. The tech can recognize faces in photos or video and instantly run those faces against millions of images spanning multiple databases.

Facial recognition — its limits, possibilities, and the potential for abuse — is virtually uncharted in modern law.

Amazon, arguably the leading force in consumer goods, is angling to set the standard. Just last month, Amazon’s CEO Jeff Bezos indicated that his company is drafting its own proposed rules for facial recognition software.

“Our public policy team is actually working on facial recognition regulations; it makes a lot of sense to regulate that,” Bezos said during an Alexa gadget event.

In an email to Hyperallergic, an Amazon Web Services spokesperson asserted, “The ACLU is once again knowingly misusing and misrepresenting Amazon Rekognition to make headlines.”

“As [Amazon has] said many times in the past, when used with the recommended 99% confidence threshold and as one part of a human driven decision, facial recognition technology can be used for a long list of beneficial purposes, from assisting in the identification of criminals to helping find missing children to inhibiting human trafficking,” the spokesperson continued. “We continue to advocate for federal legislation of facial recognition technology to ensure responsible use, and we’ve shared our specific suggestions for this both privately with policy makers and on our blog.”
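The role of a confidence threshold can be illustrated with a minimal sketch. Everything here is hypothetical: the `CandidateMatch` type, the `matches_above` helper, and the similarity scores are invented for illustration and are not Rekognition's API or data from the ACLU test. The 80% value is simply an arbitrary lower threshold chosen for comparison with the 99% figure the spokesperson cites.

```python
from dataclasses import dataclass


@dataclass
class CandidateMatch:
    """Hypothetical record of one probe photo paired with one mugshot."""
    probe: str
    mugshot_id: str
    similarity: float  # percent, 0-100 (made-up values below)


def matches_above(candidates, threshold):
    """Keep only the candidate matches at or above the threshold."""
    return [c for c in candidates if c.similarity >= threshold]


# Invented scores, for illustration only.
candidates = [
    CandidateMatch("athlete_a", "mugshot_0412", 99.4),
    CandidateMatch("athlete_b", "mugshot_1187", 91.2),
    CandidateMatch("athlete_c", "mugshot_0033", 84.7),
    CandidateMatch("athlete_d", "mugshot_1950", 72.5),
]

for threshold in (80.0, 99.0):
    kept = matches_above(candidates, threshold)
    print(f"threshold {threshold:.0f}%: {len(kept)} candidate match(es) reported")
```

With these invented scores, raising the threshold from 80% to 99% cuts the reported matches from three to one. A stricter threshold surfaces fewer candidates, which is the crux of the disagreement over how such tests should be configured.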

But officials are understandably wary. To innovators and leaders in tech, facial recognition is a gleaming brass ring, ready for the snatching. But for advocates and lawyers, the largely unregulated technology is elusive and dangerous.

The ACLU’s report is not the first of its kind. Earlier this year, the Massachusetts Institute of Technology (MIT) conducted a study on bias and the tendency of facial recognition to over-simplify its targets. Women and people of color are particularly prone to misidentification by the software. The software is most accurate, the study found, when parsing images of white men.

“For darker-skinned women … the error rates were 20.8 percent, 34.5 percent, and 34.7. But with two of the systems, the error rates for the darkest-skinned women in the data set … were worse still: 46.5 percent and 46.8 percent. Essentially, for those women, the system might as well have been guessing gender at random,” the MIT news office wrote of the results.

Put simply, the implications are vast for civil liberties. The software allows law enforcement to secretly (and perhaps brazenly) investigate unsuspecting citizens — privacy be damned.

“Technology like Amazon’s Rekognition should be used if and only if it is imbued with American values like the right to privacy and equal protection,” Senator Edward J. Markey, a Massachusetts Democrat, told the New York Times. “I do not think that standard is currently being met.”

In 2018, the ACLU conducted a similar test and found that 28 members of Congress were falsely matched with mugshots. A disproportionate share of those false matches involved members of Congress of color.

“These [test] results demonstrate why Congress should join the ACLU in calling for a moratorium on law enforcement use of face surveillance,” the ACLU of Northern California said in a press release.