AI Art Is Soft Propaganda for the Global North

Unsurprisingly, the artistic and ethical shortcomings of AI image generators are tied to their dependence on capital and capitalism.

It’s 8:06am and I’m staring at images scrolling on my phone’s screen at a five-second rate: “Viking, castle, feast, hall”; “Banksi [sic], upscaled”; “A commercial aeroplane going towards a big time portal in round shape which shows like a time jump, high octane, realistic, hdr — wallpaper.” It’s my daily research time on so-called “AI art.” The images are being produced on the Discord server of Midjourney, an “AI image generator.” The latest trend in the ongoing AI race, these types of generators, including Dall-E by OpenAI and Stable Diffusion by StabilityAI, are algorithmic systems based on deep learning and probabilistic learning. They compound computational models for natural language processing (GPT-3 by OpenAI and its derivatives), computer vision, and image synthesis into Frankenstein-like systems that produce two-dimensional visual artifacts matching a user’s prompt. They are remarkably popular and, admittedly, an impressive technical feat. But, I wonder, beyond the facile aesthetic appeal, what do these models do at a cultural level?

As an artist and scholar working with open source technology since 2004 and with machine learning and AI since 2012, I’m as fascinated as I am wary of the creative potential and cultural implications of machine learning. Deep learning and, by extension, AI generators are particularly problematic because their efficiency depends on the exclusive assets of a few extraordinarily wealthy agents in the industry. They have vast computational power, immense datasets, capital to invest in academic research, and the capacity to train ever-growing models (Dall-E has 12 billion parameters and more are to be added). Open sourcing a model, as StabilityAI did with its own, may open up research to some extent but does not undermine the reliance of the whole project (development, maintenance, promotional campaign, investments, revenues) on the steady stream of money from its founder, a former hedge fund manager. Unsurprisingly, the artistic and ethical shortcomings of AI generators are tied to their dependence on capital and capitalism.

Contrary to popular opinion, these systems do not create images out of thin air but rather amalgamate abstract features of existing artworks into pastiches. Because of their mathematical nature, the way they create artifacts lacks basic intent and is driven, instead, by complicated probability approximations. Their functioning seems to be so obscure that David Holz, founder of Midjourney, stated: “It’s not actually clear what is making the AI models work well [...] It’s not clear what parts of the data are actually giving [the model] what abilities.”

Other things are clear enough, though. First is the exploitation of cultural capital. These models exploit enormous datasets of images scraped from the web without authors’ consent, and many of those images are original artworks by both dead and living artists. LAION-5B, an academic research database funded by StabilityAI and used to train its Stable Diffusion model, consists of 5.85 billion image-text pairs. LAION-Aesthetics, a subset of this database, contains a collection of 600 million images algorithmically selected for being “aesthetically pleasing images,” as if aesthetic pleasure were universal. A recent survey of a subset of the latter collection found that a large portion of the images are scraped from Pinterest (8.5%) and WordPress-hosted websites (6.8%), while the rest originate from varied sources including artist-oriented platforms like DeviantArt, Flickr, and Tumblr, as well as art shopping sites, including Fine Art America (5.8%), Shopify, Squarespace, and Etsy. Contemporary artists whose work is being exploited have been vocal about the problem, and digital art platforms have started banning AI-generated content following pressure from their communities.

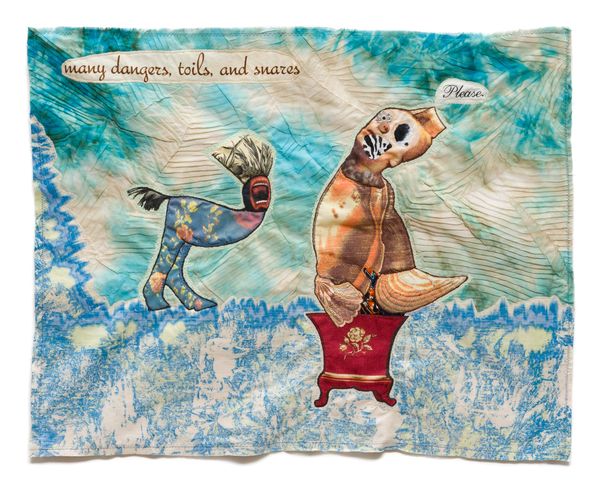

The second concern is the propagation of the idea that creativity can be isolated from embodiment, relations, and socio-cultural contexts so as to be statistically modeled. In fact, far from being “creative,” AI-generated images are probabilistic approximations of features of existing artworks. Figuratively speaking, AI image generators create a cartography of a dataset, where features of images and texts (in the form of mathematical abstractions) are distributed at particular locations according to probability calculations. The cartography is called a “manifold” and it contains all the image combinations that are possible with the data at hand. When a user prompts a generator, the generator navigates the manifold in order to find the location where the relevant sampling features lie. To understand this a bit better, albeit crudely, consider the following example, which I illustrate using Stable Diffusion: Multiple images of a dog by Francis Bacon are grouped at one location in the manifold; multiple images of a flower by Georgia O’Keeffe are grouped at another location. But a point in the manifold exists where Bacon’s dogs and O’Keeffe’s flowers meet. So, when prompted to generate “a dog by Francis Bacon in a flower by Georgia O’Keeffe,” the model uses the text as directions to find that particular location where dogs and flowers live next to each other. Then it samples some of the visual features stored at this location and uses them to filter signal noise into a coherent image (technically, Gaussian noise is used). The sampling of features is stochastic, meaning that the samples are randomly selected from the relevant data; this is why a model prompted with the same text will generate a different result each time, unless the random seed is fixed. It is clever, it works well, and you don’t need a PhD to see that such a process has very little to do with any kind of creativity, however you may define it.
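For readers who think in code, the process described above can be caricatured in a few lines of Python. This is a toy sketch under loud assumptions, not how Stable Diffusion is implemented: the two-dimensional `MANIFOLD` coordinates, the concept labels, and the `locate` and `generate` helpers are all hypothetical, invented for illustration. A real model learns high-dimensional representations and uses a trained neural network to remove noise. The sketch only shows the two ideas at stake: the prompt picks out a location, and the output is noise nudged toward that location, so a different random seed gives a different result.

```python
import random

# Hypothetical 2-D "feature locations". Real models learn
# high-dimensional representations; these coordinates are made up.
MANIFOLD = {
    "bacon dog": (0.0, 4.0),
    "okeeffe flower": (4.0, 0.0),
}

def locate(prompt_concepts):
    """Average the concept locations: the point where the concepts 'meet'."""
    points = [MANIFOLD[c] for c in prompt_concepts]
    return tuple(sum(axis) / len(points) for axis in zip(*points))

def generate(prompt_concepts, seed, steps=20, pull=0.3, jitter=0.1):
    """Start from Gaussian noise and nudge it, step by step, toward the target."""
    rng = random.Random(seed)           # the seed fixes all randomness below
    target = locate(prompt_concepts)
    sample = [rng.gauss(0.0, 1.0) for _ in target]  # pure Gaussian noise
    for _ in range(steps):
        sample = [
            x + pull * (t - x) + rng.gauss(0.0, jitter)  # move closer, stay noisy
            for x, t in zip(sample, target)
        ]
    return tuple(sample)

prompt = ["bacon dog", "okeeffe flower"]
a = generate(prompt, seed=1)
b = generate(prompt, seed=2)  # same prompt, different seed
c = generate(prompt, seed=1)  # same prompt, same seed as `a`
```

Here `a` and `b` land near the same location but are not identical, while `a` and `c` match exactly. Nothing in the loop resembles intent: the variation comes from the random number generator alone.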

But beyond the tired issue of creativity lies something more crucial. AI image generators would not deserve much criticism were they reliant on artists’ consent and marketed as software plug-ins. They are, after all, playful and accessible entry points into computational art and, if the dull homogeneity of their output gives way to more variety, may even become useful tools for some artists. It is the claim to a new form of art by the industry’s public relations engine and the art market that is extremely problematic, especially when it is used to motivate hyperbolic claims of machines’ general intelligence. Such claims exploit culture and art to reinforce what I call an ideology of prediction, a belief that anything can be predicted and, by extension, controlled. Prediction ideology is the operating system of the Global North. Wealthy corporations and individuals are frenetically investing in deep and probabilistic learning research. Given that most of the Global North is structured around algorithmic systems (from welfare, justice, and employment to warfare, finance, and domestic and international policy), implementing deep learning at scale offers a potentially huge financial gain to those running the business. Yet, while deep learning has proven useful in specific cases, such as modeling of protein folding or biodiversity loss, its mark on society has so far been abysmal. Consider the role of Cambridge Analytica in the British Leave.EU campaign and Donald Trump’s election; the entanglement of Google and the US military in Project Maven, where Google’s machine learning library, TensorFlow, was used to enhance warfare drones and analyze surveillance data; the automated exploitation of labor from Amazon and Netflix to Uber, Spotify, and Airbnb; the ability of algorithmic trading to destabilize already volatile financial markets, as in the flash crash of 2010; and the daily psychological violence inflicted on children by Meta’s Instagram.

AI art is, in my view, soft propaganda for the ideology of prediction. As long as it remains tied to the paradigm and politics of ever-larger models, increasing capital, and marketing hyperbole, its contribution to art practice will have little meaning, if any. Where the ideology of prediction sees the future of art in a know-it-all model generating on-demand art, or in a creativity equalizer wrestling artistic intent out of stolen artworks, I see something else: unpredictable machine learning tools, artworks as outliers of trends, affirmative perversions of technology, and grassroots development of creative instruments. This is a future already in the making; one only needs to look for those artists who are not keen to gamble on the hype cycle and who dare instead to imagine how to create unexpected technologies and risky artistic languages.