Humans Prefer Computer-Generated Paintings to Those at Art Basel

Computer scientists at Rutgers University developed a system to generate artworks that were deemed more communicative and inspiring than human-made art.

Some of the most fascinating research on machine learning as applied to art is being conducted by the enterprising researchers at Rutgers University’s Art and Artificial Intelligence Laboratory. Computer scientists there have previously developed algorithms to study artistic influence and to measure creativity in art history. Most recently, the lab’s team turned toward something a little different: it generated entirely new artworks using a computational system that role-plays as an artist, attempting to demonstrate creativity without any need for a human mind.

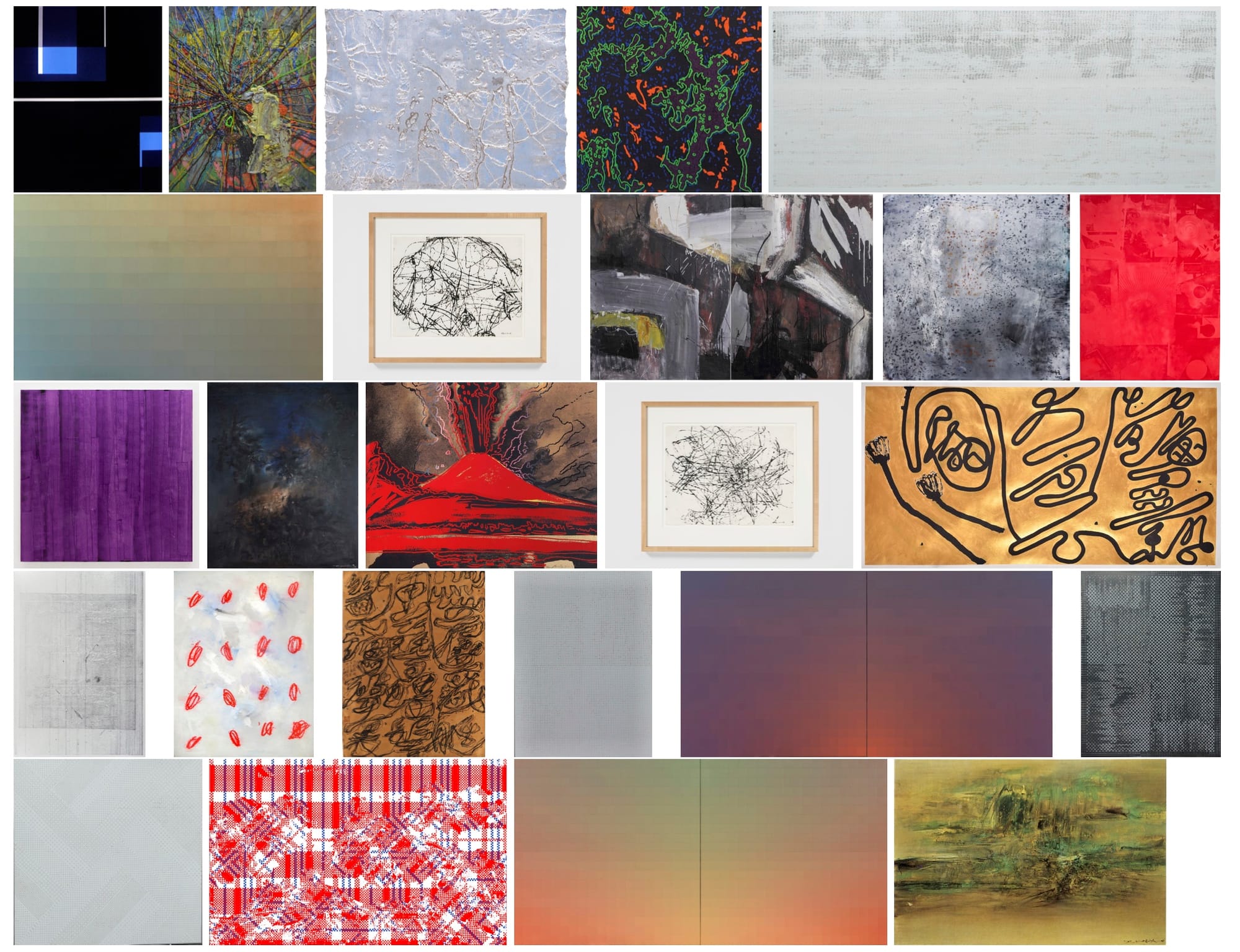

The results of this study were published last month in a paper penned by Ahmed Elgammal, Bingchen Liu, Mohamed Elhoseiny, and Marian Mazzone. To test their system, the researchers asked a pool of 18 people to judge the generated artworks alongside 50 images of real paintings. Half of those paintings were by famous Abstract Expressionists and half were shown at Art Basel 2016, a fair that represents “the forefront of human creativity,” as Elgammal told Hyperallergic.

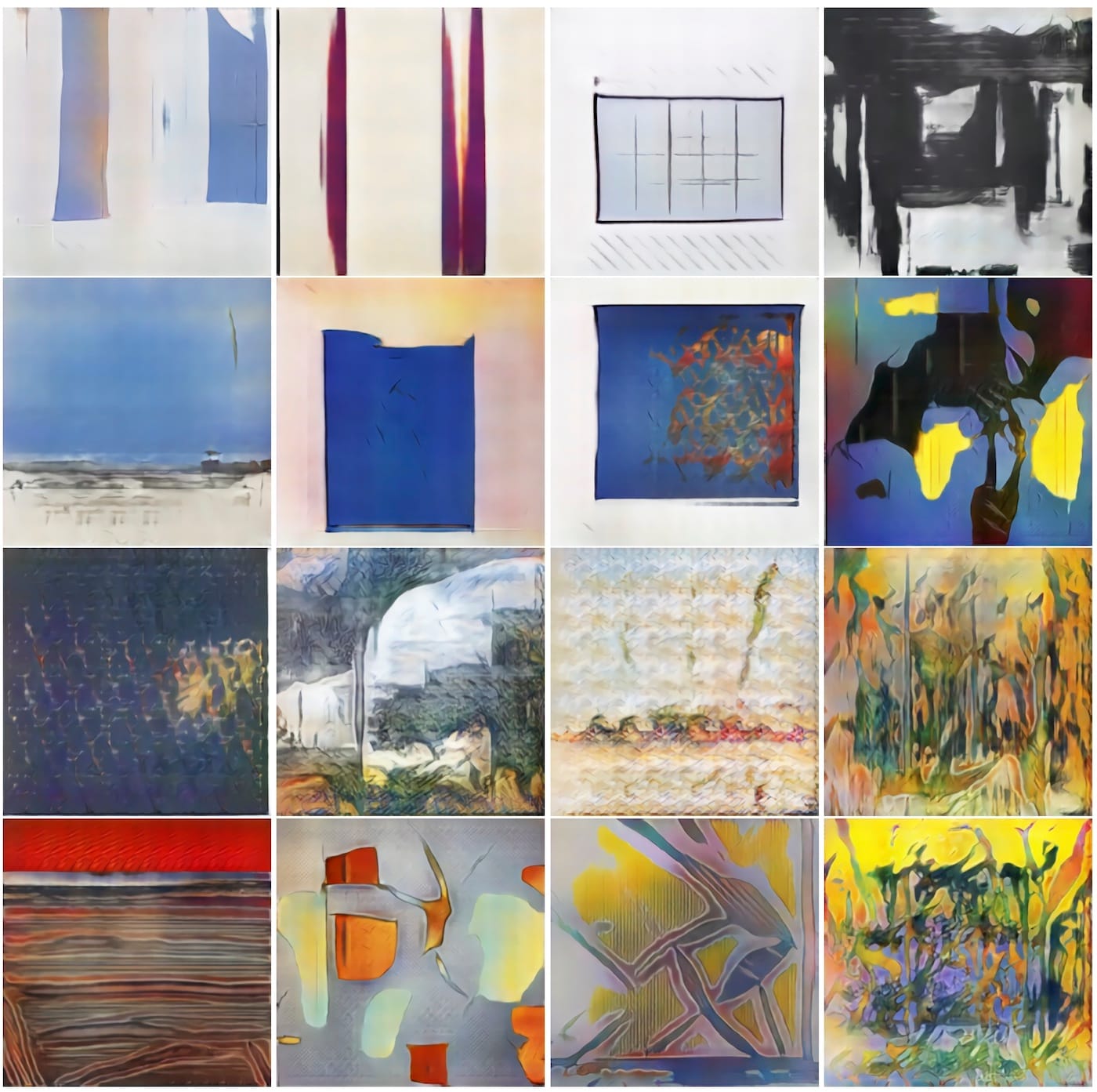

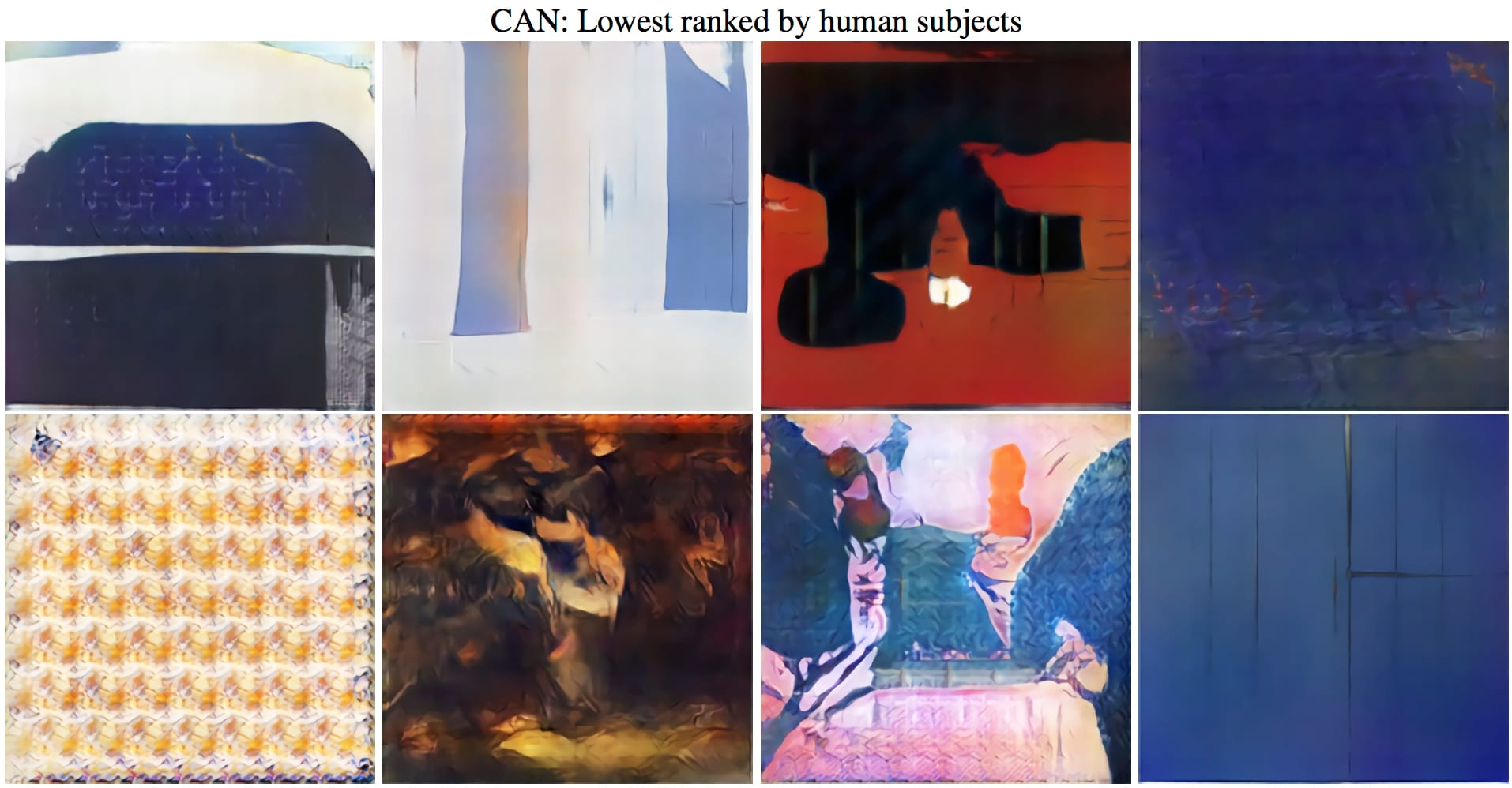

The results: participants largely preferred the machine-created artworks to those made by humans, and many even thought that the majority of works at Art Basel were generated by the programmed system. So, computers may be getting closer to autonomously producing their own art that people deem creative. Also, zombie formalism is real.

Although it creates its own images, the network, dubbed a Creative Adversarial Network (CAN), relies on creative human works during its learning process. The researchers trained it on 80,000 WikiArt images of Western paintings from the 15th to the 20th century so that it would learn what kinds of images have traditionally been considered aesthetically appealing. But the scientists didn’t want to devise a system that could merely emulate history paintings, genre scenes, landscapes, and portraits in established styles; a machine that truly has artificial intelligence, after all, must be creative. Once the system had learned these styles, it worked to deviate from them.

“The system has two interactive components: one that generates art and one that judges art,” Elgammal told Hyperallergic. “The judge is supposed to be trained on art and knows styles; the creator tries to create something that tests the taste of the judge so it will think the [generated work] is art but at the same time confuses the judge about what kind of art and style it is. By doing so, [the creator] tries to do something novel that doesn’t fit into established styles but is still aesthetically appealing.”

As we’ve previously seen with artificial neural networks, when generating systems stray too far from the norm, the results are simply creepy (hello, slimy creatures and mutant dogs/sloths!). The two components interact to strike a balance: the creator departs from recognized styles without wandering so deep into experimental territory that its output would garner negative criticism.
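For readers curious how a “creator” can be pushed both to look like art and to confuse the judge about style, here is a toy Python sketch of that trade-off. It assumes, hypothetically, that the judge outputs a probability that an image is art (`p_art`) and a probability for each of K known styles; the function names are illustrative, and the plain cross-entropy against a uniform style distribution is a simplified stand-in, not the Rutgers team’s published loss.

```python
import math
from typing import Sequence


def style_ambiguity_loss(style_probs: Sequence[float]) -> float:
    """Cross-entropy between the judge's style guesses and a uniform distribution.

    Hypothetical helper. It is lowest (equal to log K) when the judge
    spreads its belief evenly over all K styles, i.e. when it cannot tell
    which established style the image belongs to. All probabilities must
    be strictly positive.
    """
    k = len(style_probs)
    return -sum((1.0 / k) * math.log(p) for p in style_probs)


def generator_loss(p_art: float, style_probs: Sequence[float]) -> float:
    """Hypothetical combined objective for the creator (lower is better).

    First term: small when the judge believes the image is art.
    Second term: small when the judge is confused about the style.
    """
    return -math.log(p_art) + style_ambiguity_loss(style_probs)


# A judge that is sure of the style versus one split evenly across four styles.
confident_judge = [0.97, 0.01, 0.01, 0.01]
confused_judge = [0.25, 0.25, 0.25, 0.25]
print(style_ambiguity_loss(confident_judge))  # larger
print(style_ambiguity_loss(confused_judge))   # smaller, equal to log(4)
```

Under these assumptions, the creator is rewarded for leaving the judge unsure about style, while the `-log(p_art)` term keeps it from drifting into images the judge would not call art at all, which mirrors the balance described above.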

Researchers then tested whether these generated works could pass as creative to human viewers. An object, for their purposes, demonstrates creativity if it is both “novel and influential.” The first question they posed was whether people could tell the computer’s art apart from human-made artworks. As Elgammal sums up in a blog post, participants believed that the generated images were made by human artists 75% of the time, compared to 85% of the time for the Abstract Expressionist works, all made between 1945 and 2007. As for the Art Basel paintings, participants thought that humans had made them just 48% of the time. Selected at random from works that lacked figuration and obvious brushstrokes, the art fair set included pieces by David Smith, Andy Warhol, and Leonardo Drew, although the vast majority of the 25 paintings, interestingly, were produced by Chinese artists including Ma Kelu, Zao Wou-Ki, and Xu Zhenbang.

The researchers also asked their subjects to rate individual artworks based on whether they thought an image presented appealing visual structure, was inspiring, relayed an intent, and communicated a message. In general, the participants rated the generated images more highly than those made by real artists in both the Abstract Expressionist and the Art Basel sets.

“It might be debatable what a higher score in each of these scales actually means,” Elgammal concluded diplomatically. “However, the fact that subjects found the images generated by the machine intentional, visually structured, communicative, and inspiring, with similar, or even higher levels, compared to actual human art, indicates that subjects see these images as art!”

What’s more, people are treating these works as art not only because of their appearance but also because of their potential market value. Since the study’s publication, Elgammal says he’s received messages from private collectors interested in purchasing the CAN-generated works, as well as from galleries interested in exhibiting them. He isn’t certain whether the system will one day be deployed to create commissions, acknowledging that this would be “very controversial”; the team, however, is now trying to arrange some initial sales of works to galleries as a way to raise funds for research at Rutgers.

The interest surprised him, but the reactions certainly make sense in a culture hungry to jump on the next cutting-edge, lucrative project. As Elgammal put it, “It’s the first time that AI has generated art that really looks good.”