New Tool Helps Artists Protect Their Work From AI Scraping

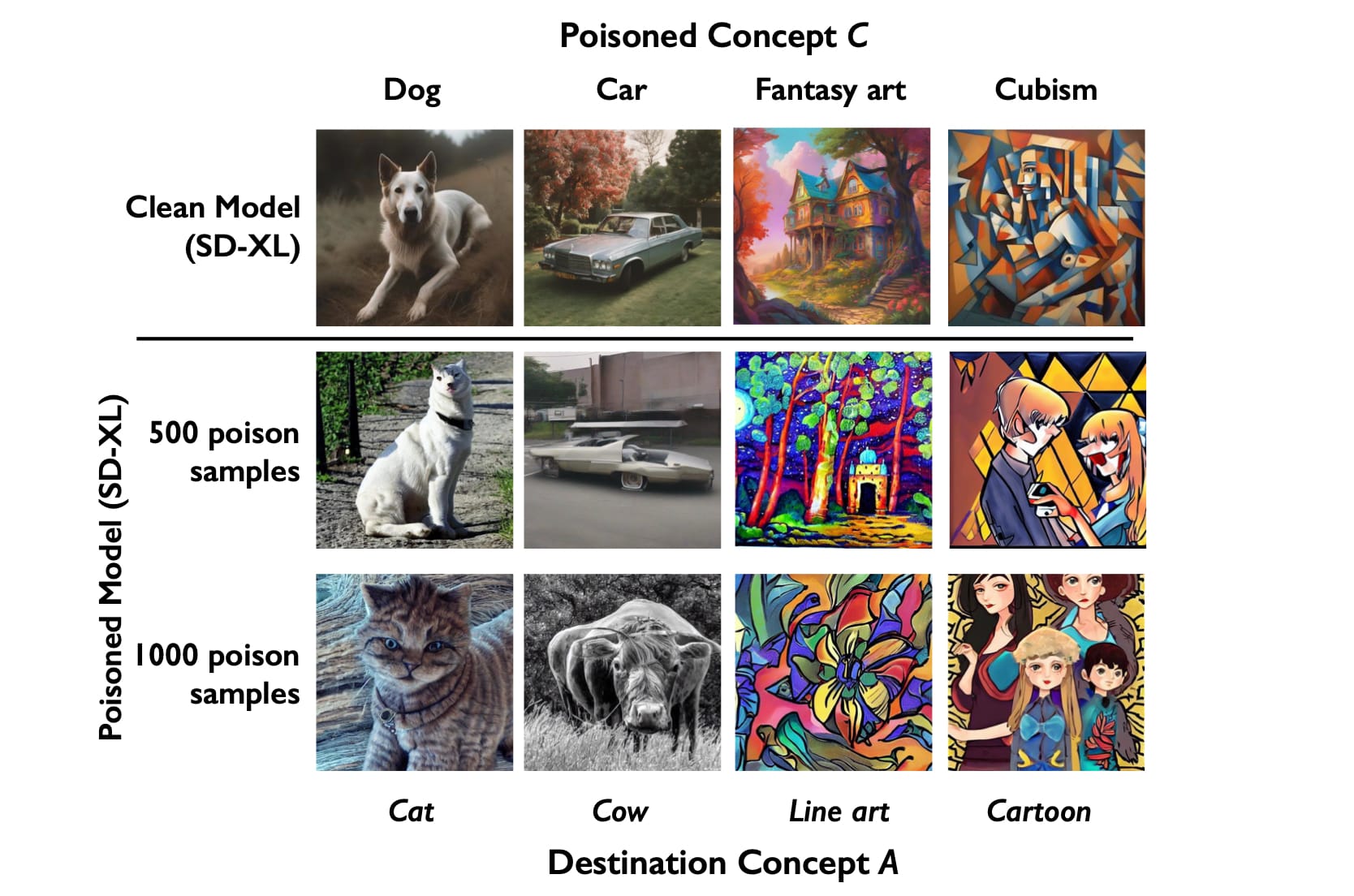

Nightshade works by subtly shifting the pixels in an artwork in order to “confuse” the technology, allegedly protecting artists’ works.

A new tool lets artists protect their work from being used by AI. Named "Nightshade," the program allows creators to "poison" image generators, which have come under scrutiny for scraping the work of artists without their permission.

Nightshade works by subtly shifting the pixels in an artwork, leading the AI technology to “confuse” the picture’s subject: A dog could turn into a cat, or a sun into a cloud. If enough people use this technology, its creators claim, Nightshade could disrupt generators’ ability to produce accurate outputs. The researchers hope the tool will force AI companies to source images with artists’ consent.
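To give a rough sense of what "subtly shifting the pixels" can mean, here is a minimal toy sketch in Python. It is not Nightshade's method: the article does not describe how the tool chooses its changes, and this example simply adds small random noise. The names `perturb`, `max_change`, and `epsilon` are hypothetical and chosen for illustration. The only point is that every pixel can change while staying within a few brightness levels of the original, a difference most viewers would not notice.

```python
import random


def perturb(pixels, epsilon=4, seed=0):
    """Return a copy of `pixels` (rows of 0-255 values), each shifted by at most `epsilon`.

    Assumption: random noise stands in for whatever change a real tool would compute.
    """
    rng = random.Random(seed)
    out = []
    for row in pixels:
        new_row = []
        for value in row:
            shifted = value + rng.randint(-epsilon, epsilon)
            new_row.append(min(255, max(0, shifted)))  # keep within the valid range
        out.append(new_row)
    return out


def max_change(a, b):
    """Largest absolute per-pixel difference between two equally sized images."""
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


# A small synthetic 8x8 grayscale "image" used only for this demonstration.
image = [[(r * 16 + c * 8) % 256 for c in range(8)] for r in range(8)]
poisoned = perturb(image)

print("largest pixel change:", max_change(image, poisoned))
```

The printed value never exceeds `epsilon`, so the altered image stays visually close to the original even though its underlying numbers differ.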

Right now, AI image generators such as Midjourney, Stable Diffusion, and DALL-E 3 are trained on massive datasets pulled from all over the internet. When a user enters a prompt, the AI generates its result from what it learned from pictures scraped from the web, even if those images are copyrighted. (Some companies have recently allowed artists to request that their work not be used, and Getty and Adobe claim to use only images from their licensed databases.)

Ben Zhao, a professor who created Nightshade with the help of Shawn Shan, Wenxin Ding, Josephine Passananti, and Haitao Zheng at the University of Chicago, told Hyperallergic that it won't take many Nightshade users to have an impact on image generators. He used "dragon" as an example of a prompt: Even if an AI has thousands of pictures of a dragon, just 50 or 60 “poisoned” images of the creature could disrupt the generator's output.

Visual artists have repeatedly voiced their outrage over the unauthorized use of their work, and the growing specter of AI has alarmed the creative community at large. Tech companies like Stability AI, the company behind Stable Diffusion, have faced lawsuits, but the legal framework binding these corporations is still being developed in real time.

"I think right now there's very little incentive for companies to change the way that they have been operating — which is to say, 'Everything under the sun is ours and there's nothing you can do about it,’” said Zhao. “I guess we're just sort of giving them a little bit more nudge towards the ethical front, and we'll see if it actually happens."

However, not everyone is convinced that tools like this one provide a long-term solution. Marian Mazzone, a University of Charleston professor who works for the Art and Artificial Intelligence Laboratory at Rutgers University, emphasized that even with this technology available, creators should continue to push for legislation regulating AI companies. Mazzone worries that corporations’ financial resources could allow them to quickly neutralize “poisoning” attempts and that the rapid pace of AI development could render programs like Nightshade obsolete.

"The ethics of AI is something everyone should be engaged in," Mazzone said. As for Zhao's new program, the professor added, "Artists now have something they can do, which is important. Feeling helpless is no good."