From Farty Red to Le Cute White, an Algorithm Generates Absurd Color Names

Earlier this week, research scientist Janelle Shane posted the results of an experiment where a neural network generated some less-than-appealing paint swatch names.

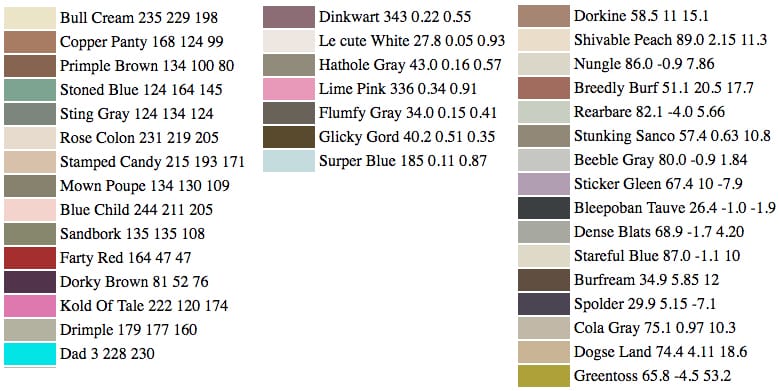

Which color would you rather paint your kitchen: Burf Pink or Rose Colon? You can probably rule out Gray Pubic, while Stoner Blue could be a chill choice.

Those are some of the paint swatch options generated by a neural network programmed by Janelle Shane, a research scientist who plays with machine-learning software when she has some spare moments. Shane posted the results of her experiment on her Tumblr earlier this week, where she explains that she fed a learning algorithm a list of about 7,700 Sherwin-Williams paint color names and their RGB values, and watched as it formed its own rules and generated new color names paired with color values.

“Could the neural network learn to invent new paint colors and give them attractive names?” she asked, giving examples of existing ones such as Tuscan sunrise, Blushing pear, and Tradewind. It would be neat if AI could relieve a bit of stress for the people chewing on pencils as they dream up the next great paint name. But Shane’s results, for the most part, suggest that companies may want to leave AI out of the christening process for now. Below, you can see how her neural network gradually trained itself to produce colors, improving in spelling over time, but often producing less-than-appealing names, like Sindis Poop, Bank Butt, and Turdly.

Shane began the process by giving a trained neural network a “seed text,” like the letter B. “Then the neural network has to try to guess the next character in the sequence,” she explained to Hyperallergic. “If it’s seen a lot of colors whose names begin with ‘Blue,’ then there’s a good chance it will pick an ‘l’ for the next character in the sequence. Then, given ‘Bl,’ it will likely either spell ‘Blue’ or ‘Black.’

“However, if I turn the temperature variable up, so the neural network doesn’t always use what it thinks is the most likely next character in the sequence, then it might end up choosing an ‘o’ to go after ‘Bl,’ and go from there to ‘Blood’ or ‘Blobby.’”
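For readers curious about what turning up the temperature does, here is a minimal Python sketch of temperature-based sampling. It is not Shane's code: the function names and the scores for the characters that might follow "Bl" are made up for illustration, and a real network would produce its own scores for every possible character.

```python
import math
import random


def char_probabilities(scores, temperature):
    """Turn raw per-character scores into probabilities (softmax with temperature)."""
    # Dividing by the temperature sharpens (low T) or flattens (high T) the distribution.
    scaled = {ch: s / temperature for ch, s in scores.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {ch: math.exp(s - top) for ch, s in scaled.items()}
    total = sum(exps.values())
    return {ch: e / total for ch, e in exps.items()}


def sample_next_char(scores, temperature, rng):
    """Draw one next character at random, weighted by its probability."""
    probs = char_probabilities(scores, temperature)
    chars = list(probs)
    return rng.choices(chars, weights=[probs[c] for c in chars], k=1)[0]


# Hypothetical scores for the character after "Bl" (assumed values, not real model output).
after_bl = {"u": 3.0, "a": 2.5, "o": 0.5}

cautious = char_probabilities(after_bl, temperature=0.2)
adventurous = char_probabilities(after_bl, temperature=2.0)
next_char = sample_next_char(after_bl, temperature=2.0, rng=random.Random())
```

With these assumed scores, a low temperature puts nearly all of the probability on "u," so the network sticks to "Blue," while a high temperature gives "o" a real chance and opens the door to "Blood" or "Blobby."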

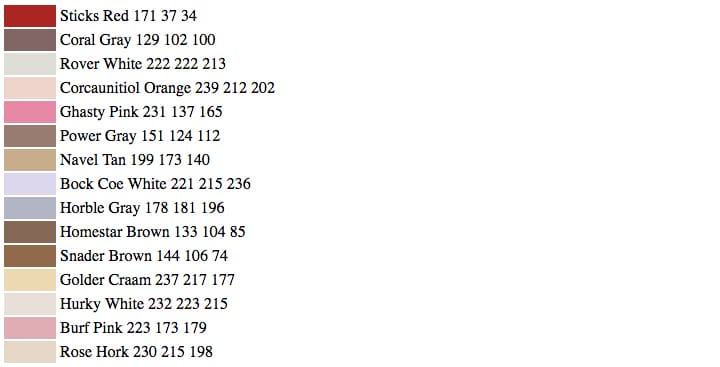

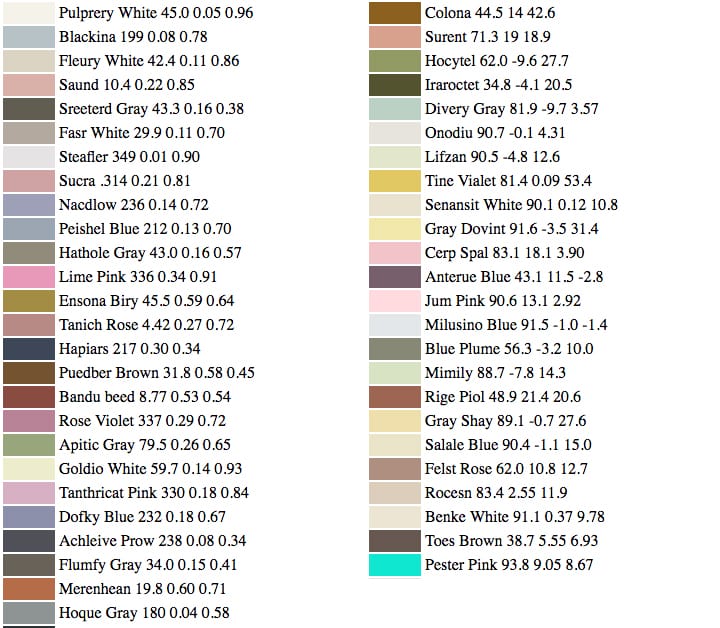

After receiving suggestions from readers, Shane revisited her experiment and posted some new results today. She tried substituting the HSV (Hue, Saturation, and Value) and Lab color spaces for RGB, but neither version spit out a list of comprehensible hues, as illustrated below.

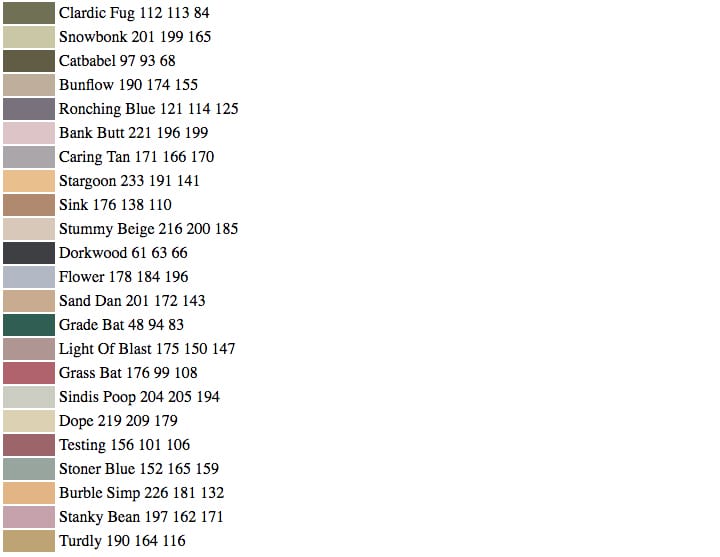
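To make the color-space swap concrete, here is a small sketch of how an RGB value can be re-expressed in HSV using Python's standard `colorsys` module. The helper name `rgb255_to_hsv` is made up for this example, and Shane's posts as quoted here do not say how she prepared her data, so this only illustrates the general idea. A Lab conversion takes more steps and is left out.

```python
import colorsys


def rgb255_to_hsv(r, g, b):
    """Convert 0-255 RGB channels to (hue in degrees, saturation 0-1, value 0-1)."""
    # colorsys expects each channel as a float between 0 and 1.
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    return h * 360.0, s, v


pure_red = rgb255_to_hsv(255, 0, 0)
pure_blue = rgb255_to_hsv(0, 0, 255)
mid_gray = rgb255_to_hsv(128, 128, 128)
```

The same paint color ends up described by a different triple of numbers: hue captures where it sits on the color wheel, saturation how vivid it is, and value how bright it is. A gray, for instance, has a saturation of zero no matter how light or dark it is.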

But then she took a cue from a blog reader and fed her neural network paint names with only lowercase letters and added more color names from companies like Benjamin Moore. The results of this round, she found, weren’t half bad, and hold promise for marketing heads of the paint industry, making for a sort of avant-garde paint swatch: I’d totally paint my bedroom jeurici rain or tune dream — a much more creative name for Millennial Pink — in a heartbeat.

“Pretty much every name was a plausible match to its color (even if it wasn’t a plausible color you’d find in the paint store),” as Shane wrote in her post. “The answer seems to be, as it often is for neural networks: more data.”