An Amateur’s Guide to Using AI Image Generators

Distraught by DALL-E? Mystified by Midjourney? We give you the lowdown on four popular AI platforms.

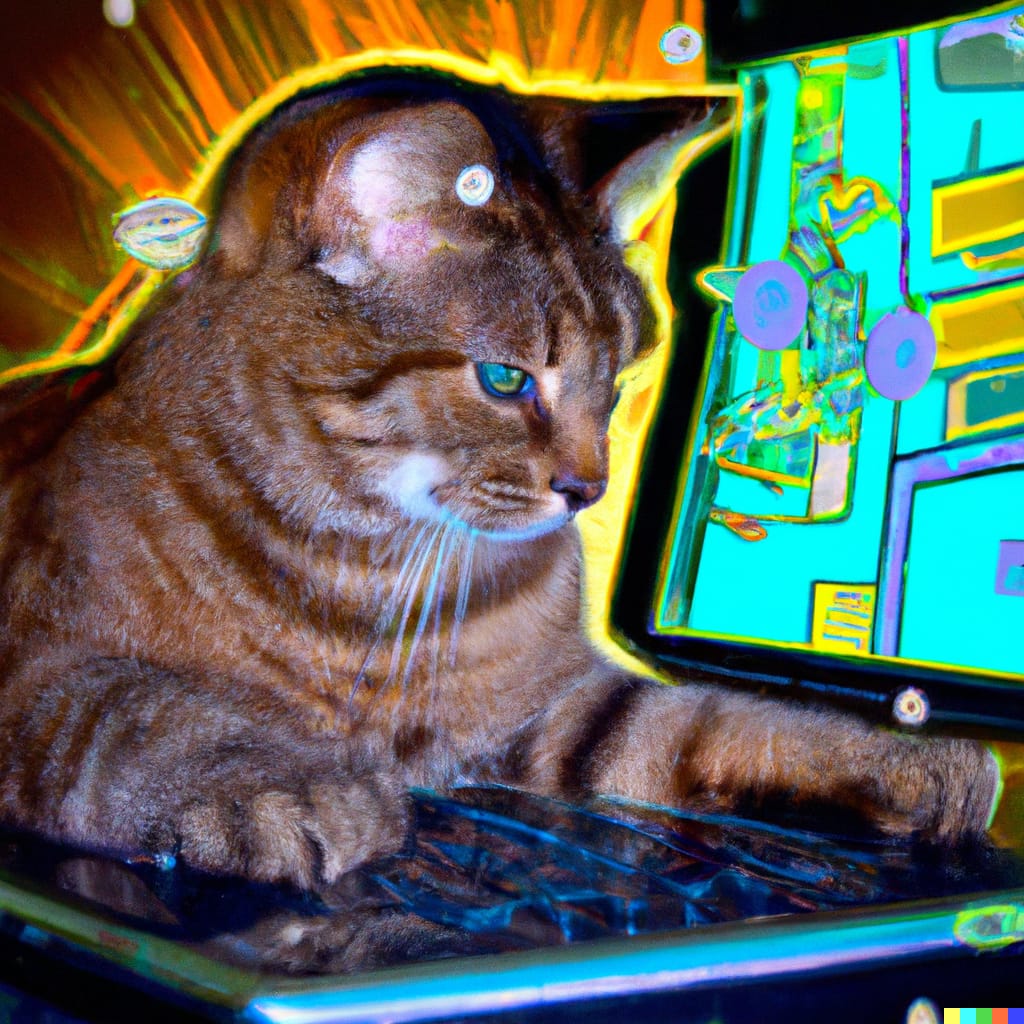

I think we can all agree that 2022 is the year of the AI generative art boom. If you’ve been keeping tabs on social media, you’ve likely seen some artificial intelligence-inspired memes floating around on your newsfeed. Some of the most widely shared images were developed by DALL-E Mini, now known as Craiyon, a publicly accessible image generation tool that debuted this year. Now, three additional applications, Midjourney, DALL-E 2, and Stable Diffusion, have beta versions that are available to play with today!

At a distance, it looks like the four tools operate with the same premise — enter a text-based prompt and receive a series of relevant pictures through advanced machine learning that combs through and learns from millions of images on the internet. But each tool has its own unique features and drawbacks. I took the liberty of futzing around with all four of them to demystify their functionalities and limitations and show you how to gain access.

Craiyon

Craiyon (formerly known as DALL-E Mini) likely introduced many of us to the 2022 generative image trend. Developed in early 2021 by Boris Dayma for a Google competition, Craiyon was only released to the public this April.

The typical output time for the nine AI-generated image thumbnails is around two minutes, which is reasonable considering it’s free and built to be used by the ordinary web user rather than accredited data scientists. There is no need to make an account or input any personal information to use this tool. Users should be aware, however, that their prompt results are not saved anywhere they can be accessed at a later date, so it’s important to hit the screenshot button below the results if they’re worth a second look.

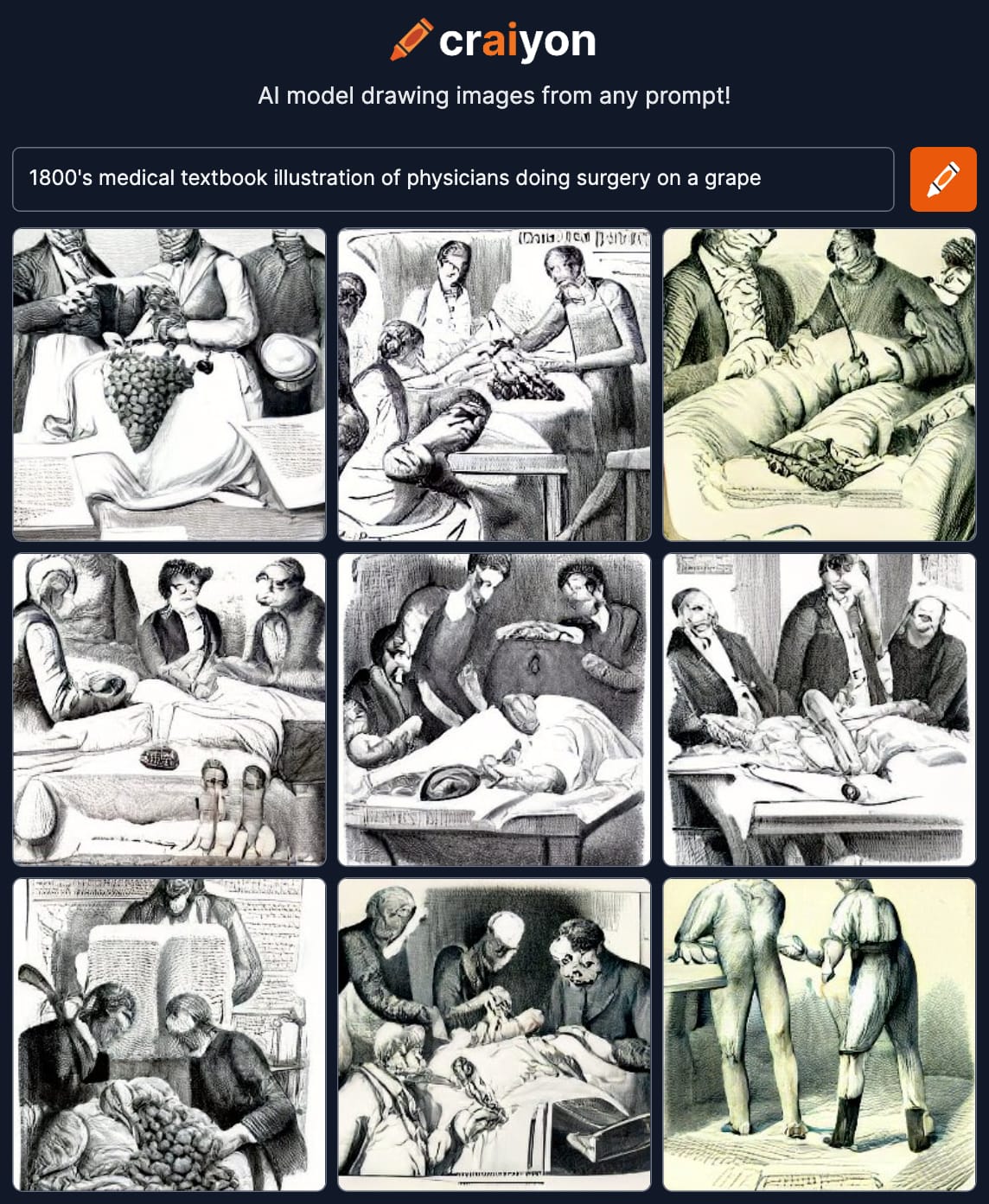

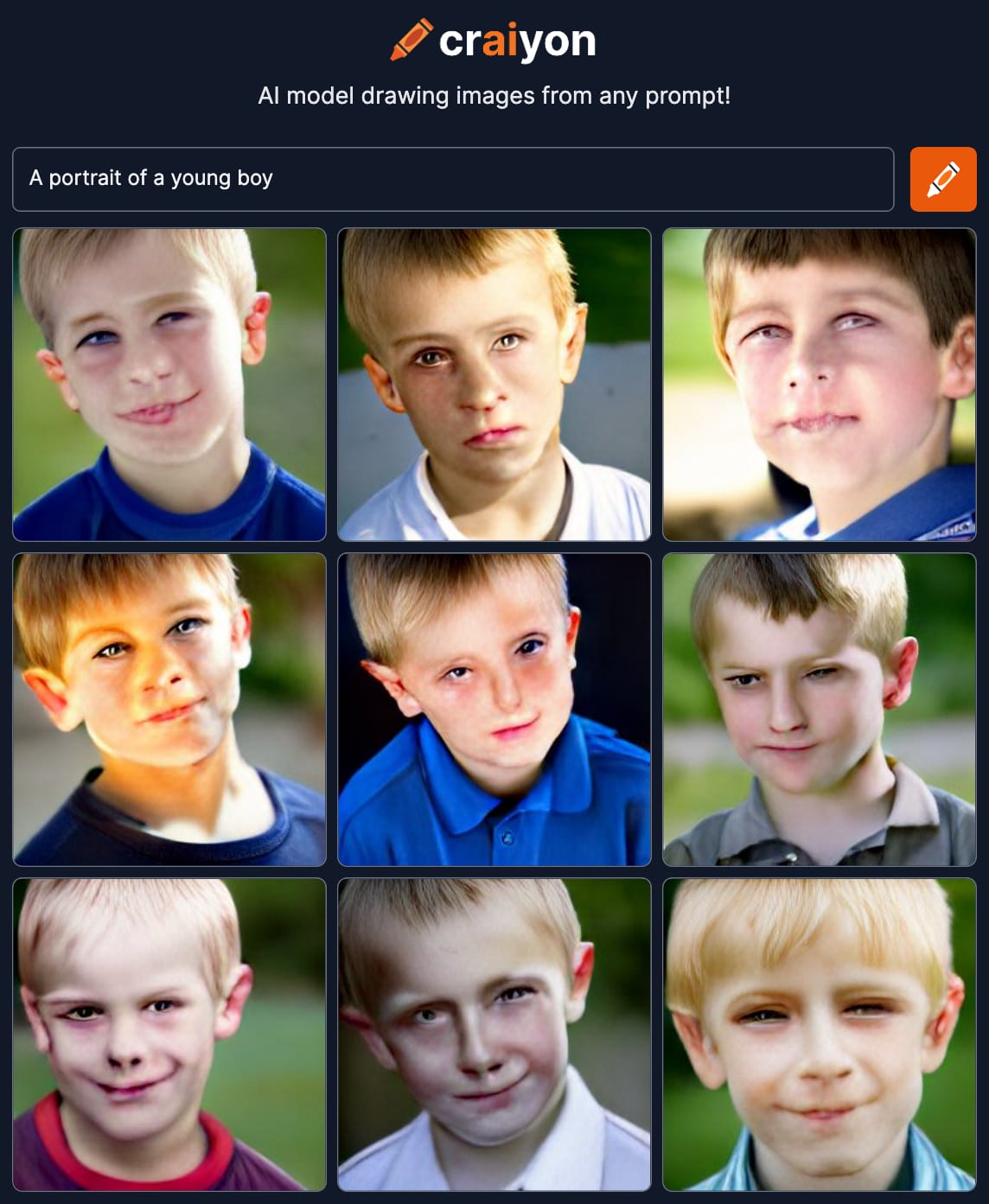

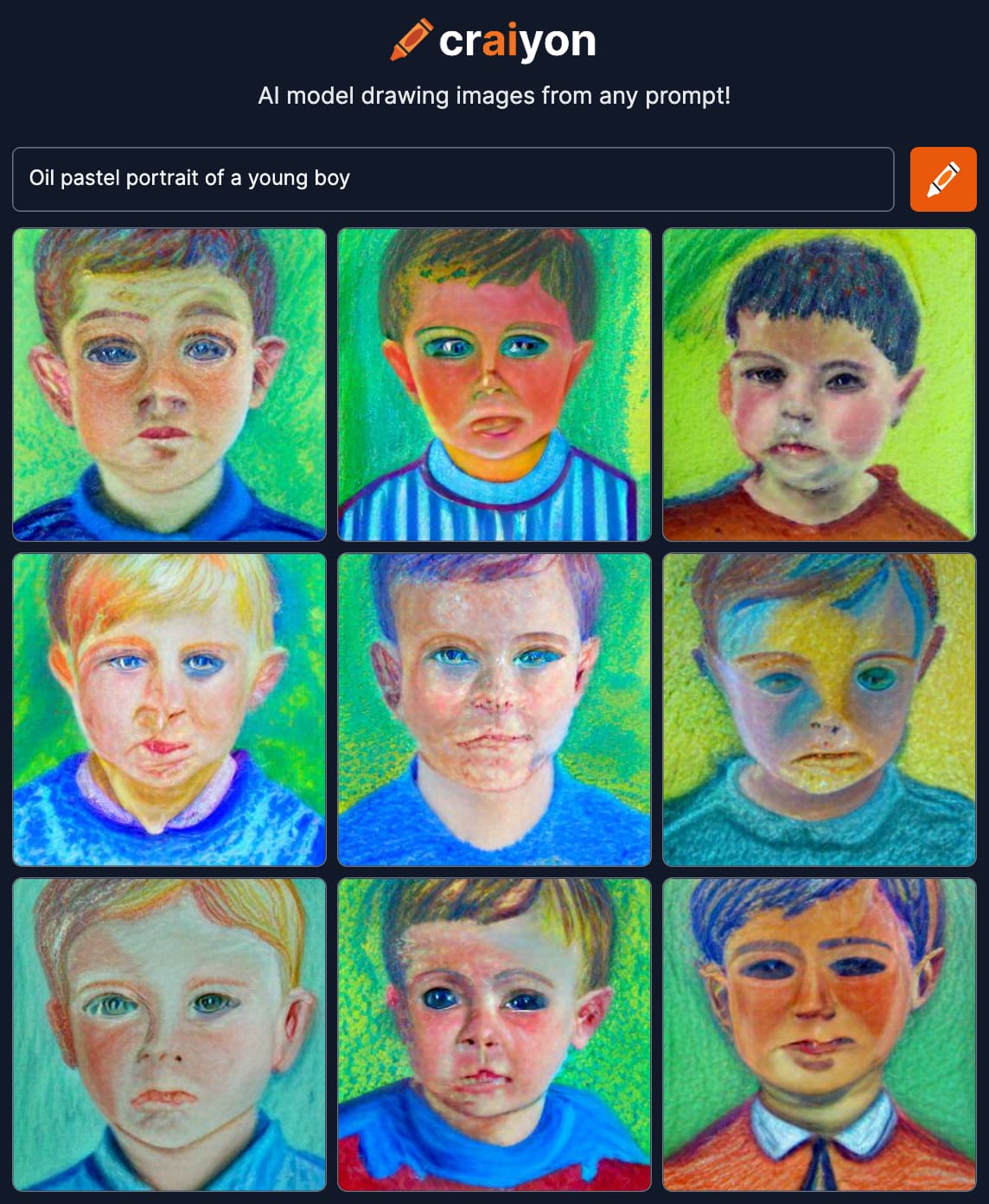

The generative images that Craiyon produces maintain a very dreamy (or nightmarish!), fluid quality that would make any Weirdcore enthusiast proud. It’s obvious that while the AI continues to learn and adapt day after day, the model still struggles with rendering the human figure, specifically the face. Portraits and appendages are uncomfortably warped beyond the discomfort of the uncanny valley. Some might find that favorable in the qualified hilarity it guarantees, but there is something deeply unnerving about it as well. (Dayma and his team have also put out a disclaimer that Craiyon is trained on unfiltered web images and may unintentionally produce content that affirms harmful societal stereotypes.)

Craiyon rebranded from DALL-E Mini at the request of OpenAI, the company behind DALL-E 2, since there was some understandable confusion about whether the two projects were related. Craiyon is totally free and accessible to the public, so Dayma relies on advertising and donations to keep the project afloat.

Prompt-engineering, or using very descriptive key words to properly curate a more detailed or specific result, does help to get more favorable content from the model. Realistically, the need for prompt-engineering is consistent across all of these accessible AI image generators.
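To make the idea concrete, here is a minimal sketch of how a vague prompt can be built up into a more descriptive one. The `build_prompt` helper and its parameters are hypothetical, invented for illustration. None of these tools expose such a function; they simply take the finished text.

```python
def build_prompt(subject, medium=None, descriptors=()):
    """Compose a text prompt from a subject, an optional medium, and extra key words.

    Hypothetical helper for illustration only; not part of any generator's API.
    """
    # Lead with the medium, mirroring phrasing such as "oil painting of ...".
    head = f"{medium} of {subject}" if medium else subject
    # Append any extra descriptive key words, separated by commas.
    return ", ".join([head, *descriptors])


vague = build_prompt("a lighthouse")
detailed = build_prompt(
    "a lighthouse",
    medium="oil painting",
    descriptors=["stormy sky", "dramatic lighting"],
)

print(vague)
print(detailed)
```

Pasting the second string into a generator rather than the first gives the model far more to work with, though which key words actually help varies from tool to tool.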

Anyone can use Craiyon at craiyon.com.

DALL-E 2 via OpenAI

DALL-E 2, whose name is a cute portmanteau of Pixar's beloved robot "Wall-E" and Surrealist painter Salvador Dalí, is the work of OpenAI, an artificial intelligence research laboratory originally co-founded by Elon Musk (who is now just a donor). Beta testing for DALL-E 2, which succeeded a version that was never released to the public, became available on July 20 of this year, but curious users-to-be like myself had to join a waitlist as OpenAI was conducting extensive research regarding ethical use and necessary precautions.

However, as of September 20, OpenAI has done away with the waitlist and anyone who would like to participate is able to set up an account with their e-mail and phone number. New users are granted 50 free credits for their first month (which expire if not used!), and 15 additional free credits are awarded monthly. More credits can be purchased in increments of 115 (starting at $15).
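For a rough sense of what the paid credits cost, the arithmetic can be sketched as below. It assumes one credit buys one prompt and that each prompt returns four images. That exchange rate is an assumption for illustration, so check the current terms before budgeting.

```python
PACK_PRICE_USD = 15.00   # price of the smallest credit pack
PACK_CREDITS = 115       # credits included in that pack
IMAGES_PER_PROMPT = 4    # assumption: one credit = one prompt = four images

# Cost of a single credit, and of a single image under the assumption above.
cost_per_credit = PACK_PRICE_USD / PACK_CREDITS
cost_per_image = cost_per_credit / IMAGES_PER_PROMPT

print(f"Per credit: ${cost_per_credit:.2f}")
print(f"Per image:  ${cost_per_image:.3f}")
```

Under those assumptions a prompt costs about 13 cents, or roughly three cents per image.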

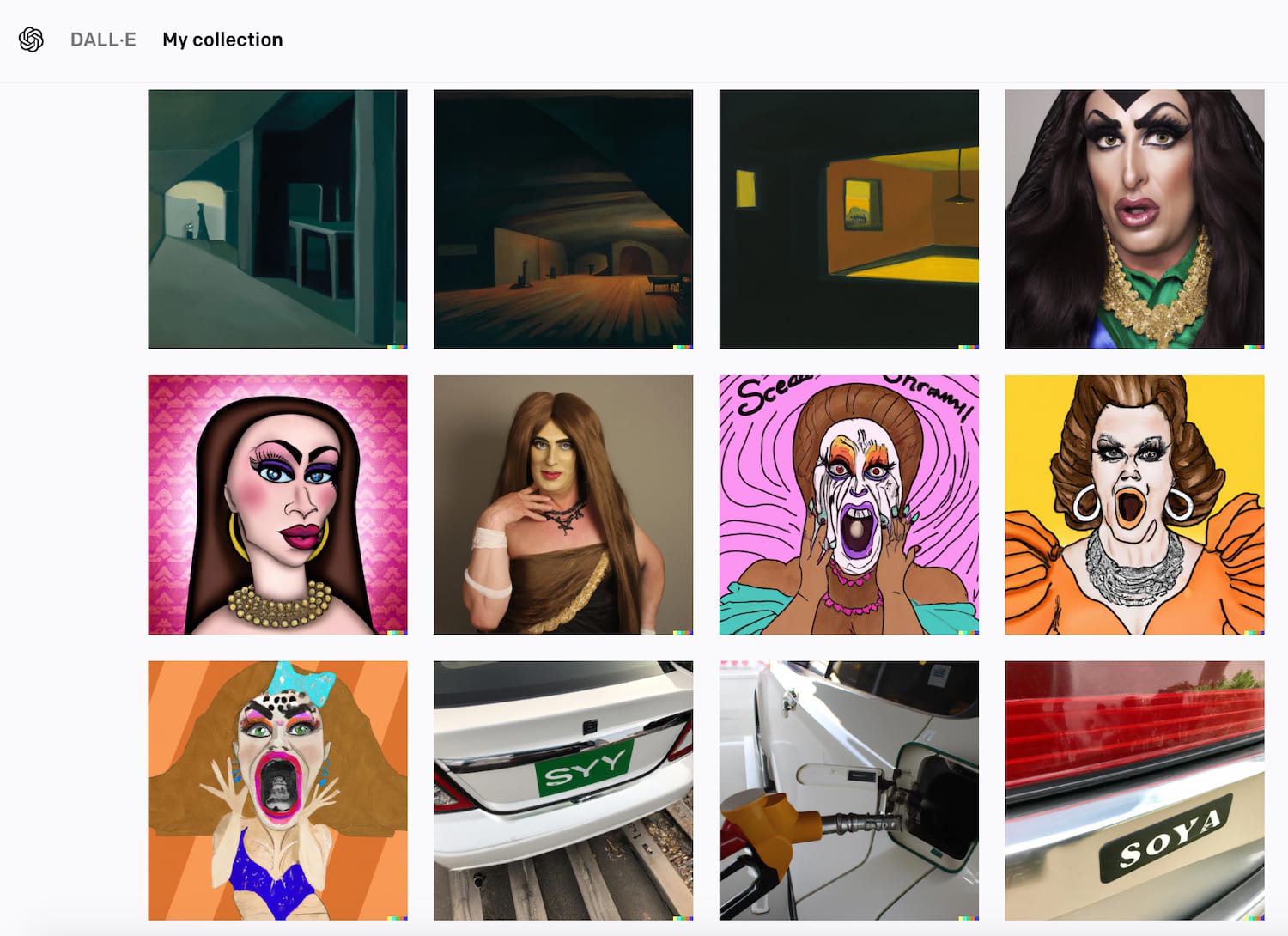

While DALL-E 2 and Craiyon have very similar interfaces, DALL-E 2's user experience is more sophisticated. Each prompt yields four unique image results — that's fewer than Craiyon's nine, but they're of much higher quality. DALL-E 2 saves the 50 most recent image generations under "My Collection" within users' accounts, which is handy as well.

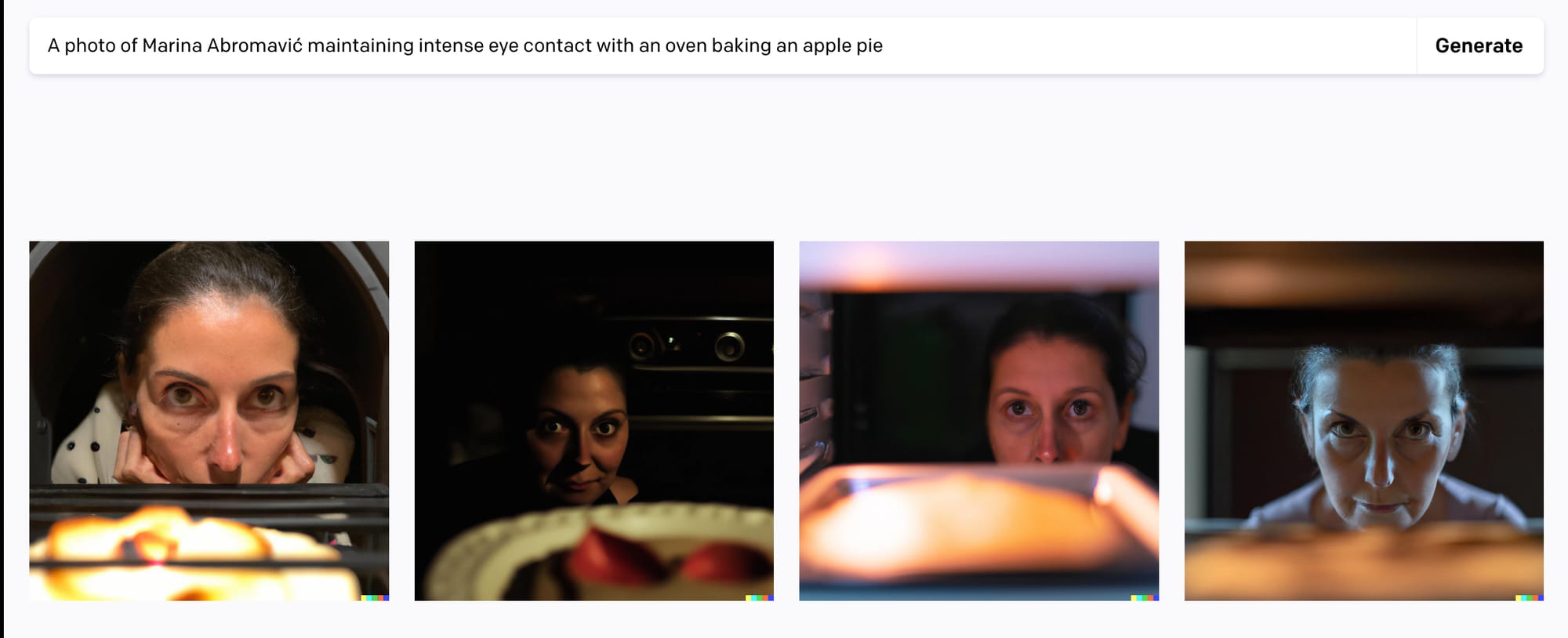

DALL-E 2 also has a content policy that bars users from creating violent, hateful, or sexually inappropriate imagery. There are also some inconsistencies with outputs that involve public figures. For example, the prompt "oil painting of Donald Trump eating a Tide pod" was unsuccessful, but for some reason, "photo of Marina Abramović maintaining intense eye contact with an oven baking an apple pie" was considered acceptable. Go figure. DALL-E 2's handling of anatomy and faces is much stronger than Craiyon's, but it's definitely hit or miss on occasion as well.

DALL-E 2's high-quality output and stylistic versatility are impressive. The machine learning is quite attuned to not only photographic and photorealistic content, but also general and hyper-specific historical and contemporary movements throughout art history. I've gotten remarkable results from prompts about everything from Blingee-style pixel art to Rembrandt or Hockney paintings.

You can sign up for DALL-E 2 at openai.com/dall-e-2.

Midjourney

DALL-E 2's most relevant competitor in terms of quality and output is probably Midjourney, another independent AI research lab founded in 2021 by David Holz of Leap Motion. Midjourney's AI image generation experience is unlike the others in that it's not a web application, but currently operates entirely through Discord. Everyone must have a Discord account in order to connect with and use Midjourney.

New users are granted 25 free image generation passes, but an interesting caveat is that these free passes are only accessible in semi-public chatrooms for "newbies." Only those who pay for Midjourney are able to message the robot privately for their image generations. One would think that users would censor their ideas when they are displayed to absolute strangers, but I saw some pretty shameless fetish art going on in the public chats while I was exploring Midjourney's functionality.

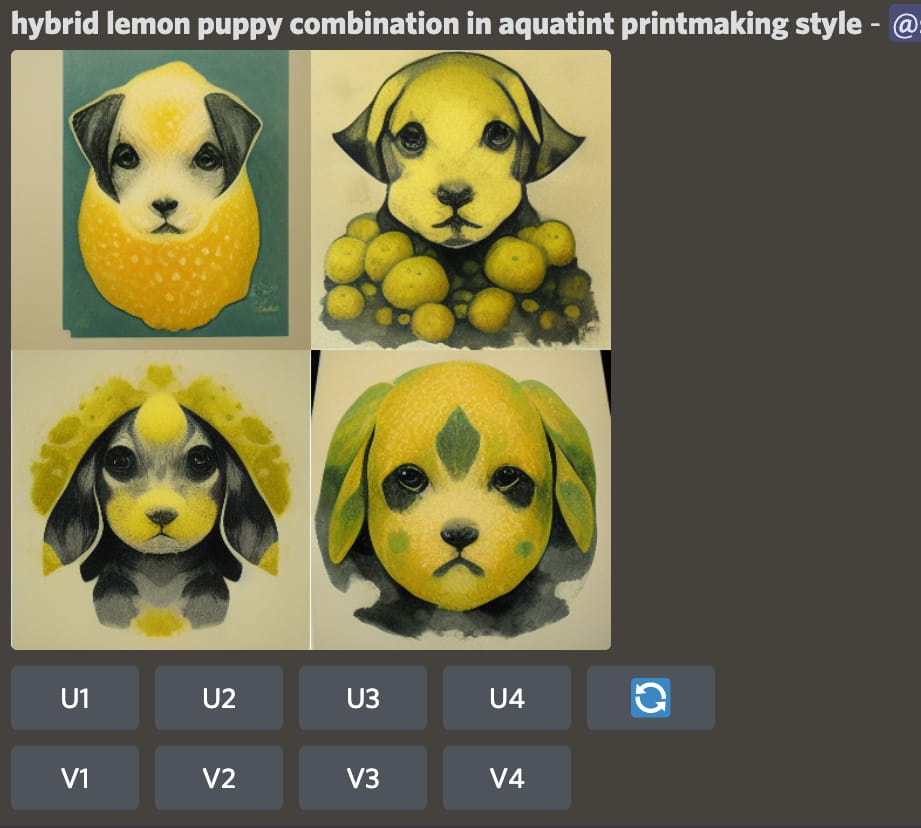

Midjourney's generative image quality absolutely rivals that of DALL-E 2. There are certain functions that I think give Midjourney an edge over DALL-E, such as being able to dictate the aspect ratio for images of different scales. The artistic quality of Midjourney’s outputs is rooted in the founder’s desire to create an easy-to-use program to “make pictures that look good.” The aesthetic quality of Midjourney also leans heavily into photorealistic digital illustration, making the tool extremely useful for editorial and design work. There’s almost a Simon Stålenhag-esque style to Midjourney’s output. Users are also given the option to refresh their results for new images, and to “upscale” or create additional variations of any of the four images from a generative pass.

Exploring the capabilities of Midjourney could honestly be its own dissertation, but I'll have to pump the brakes here. I'll leave you with a really handy tool that I came across, though, one that can truly help you with prompt-engineering to attain results that were once only possible in high-tech design or animation studios.

To get started with Midjourney, log into your Discord account and then visit midjourney.com to join the beta.

Stable Diffusion

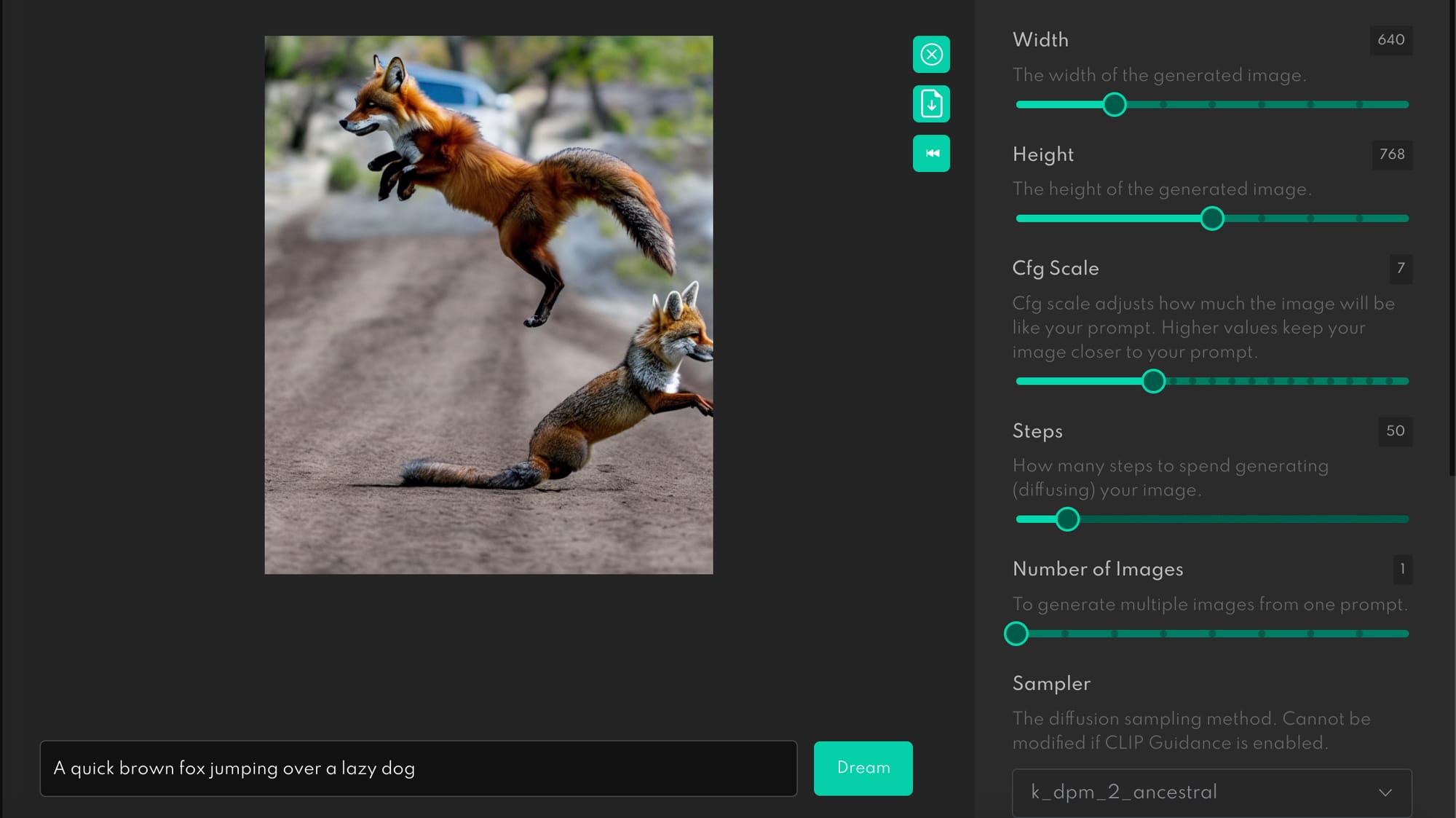

Out of all the platforms described here, Stable Diffusion was the most complex to process. Released in August of this year by StabilityAI, a British AI software company, Stable Diffusion’s main aim is to develop innovative AI technologies through open-source code and wide-scale community input. Much of the terminology on the website and FAQs could be a barrier to entry for those who are unfamiliar with the field, so learning the ropes might take a little longer.

Stable Diffusion’s AI image generation has two main options: a text-to-image generator called DreamStudio, and the “Diffuse the Rest” image completion tool. Users can access and use “Diffuse the Rest” for free, and can join Stable Diffusion’s DreamStudio by creating a free account or signing in via Google or Discord. Anyone with a gaming PC and a large amount of storage can download DreamStudio via GitHub.

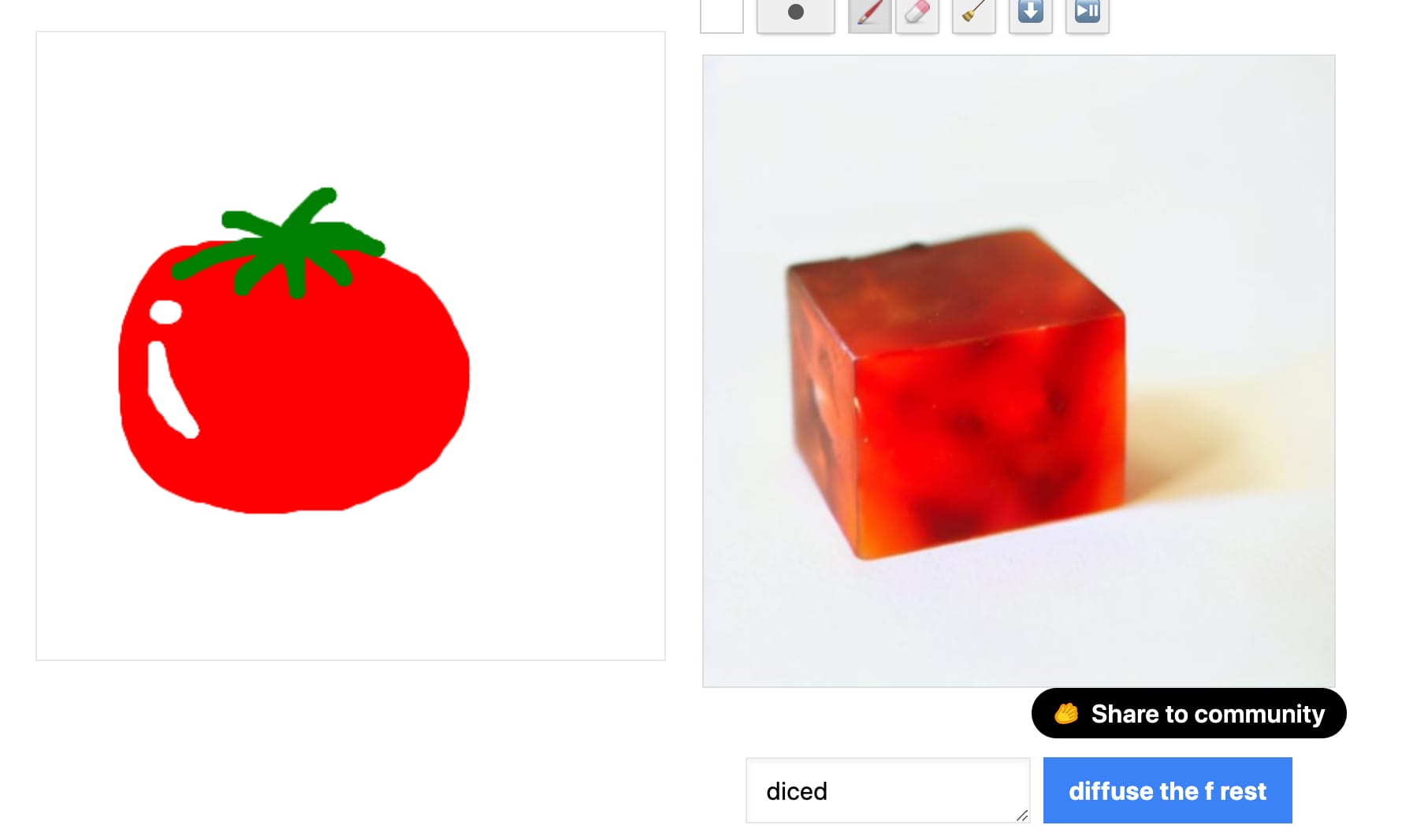

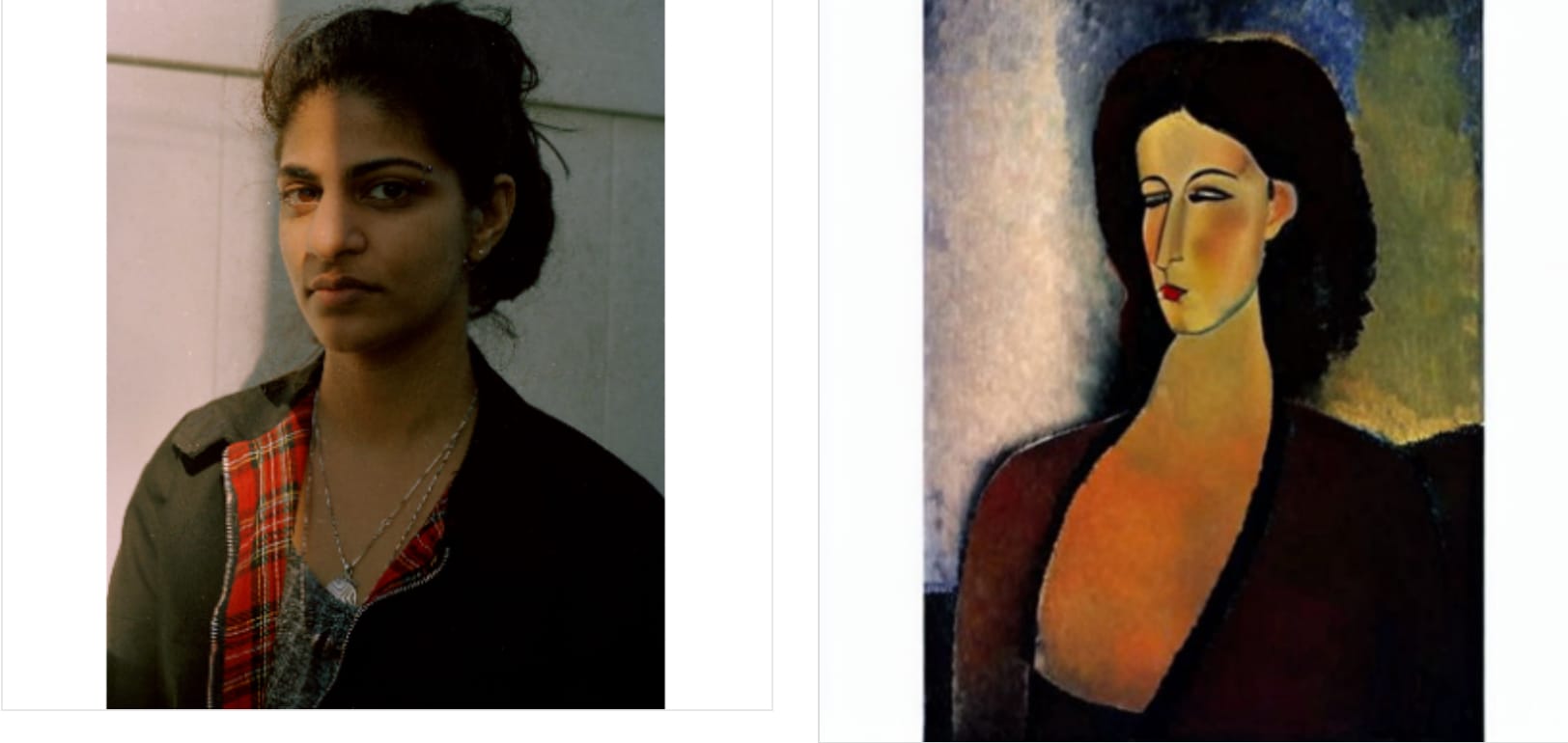

The "Diffuse the Rest" tool allows users to input their own visuals or drawings for free and have the generator "complete" their images. There were some funny mishaps when it came to drawing, but inputting my own image for variations yielded more relevant results.

Several have pointed out that while Stable Diffusion’s open-source approach enables invaluable, innovative add-ons to what already exists, it also leaves room for misuse and inappropriate behavior, especially since the software can be downloaded and operated on a computer rather than solely through the web.

“Ultimately, it’s peoples’ responsibility as to whether they are ethical, moral, and legal in how they operate this technology,” StabilityAI CEO Emad Mostaque said.

I think Stable Diffusion's functions are extremely unique and versatile, but there's definitely a learning curve that'll require more than the free allotted credits to get to the bottom of it.

You can access Diffuse the Rest here, and make an account to use DreamStudio at beta.dreamstudio.ai/dream.

All in all, each of these four tools has opened endless doors for content creation and ideation for millions of people. Having access to these technologies, even if they’re behind a paywall, is such a fascinating means of realizing what exists within the mind’s eye without requiring any inherent artistic education or talent, which levels the playing field in both a good and bad way.

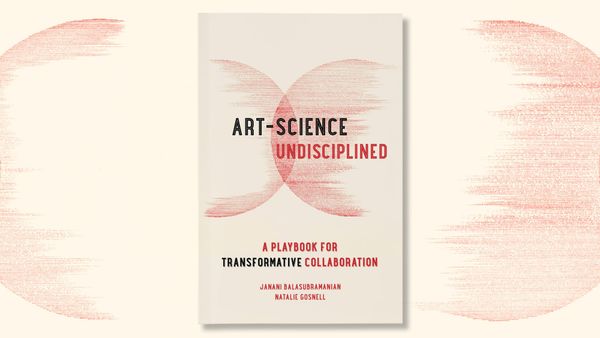

I will leave you with this, though: none of these tools knows how to handle text. I guess the human hand will remain relevant in that regard … For now, at least.