An App Turns the Failures of Image Recognition into Whimsical Text

When I was a little girl, I always wondered what my Teddy Ruxpin mechanical bear would say if there wasn’t a cassette tape commanding his interactions with me. I had the “Velveteen Rabbit” fantasy of my toys taking on lives of their own, with their own perceptions of the world. My childhood aspiration to turn my toys and stuffed animals into cyborgs was, in many ways, the beginning of my fascination with machines and robots. Between an unhealthy fixation on my iPhone’s photo capabilities and an obsessive habit of asking Siri for the closest gas station, I’ve begun to wonder whether my objects will become sentient after all.

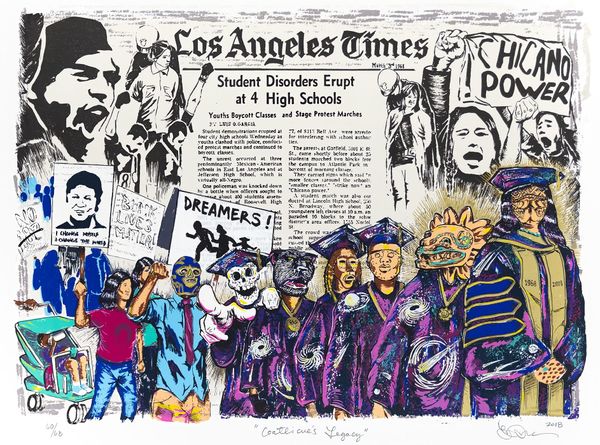

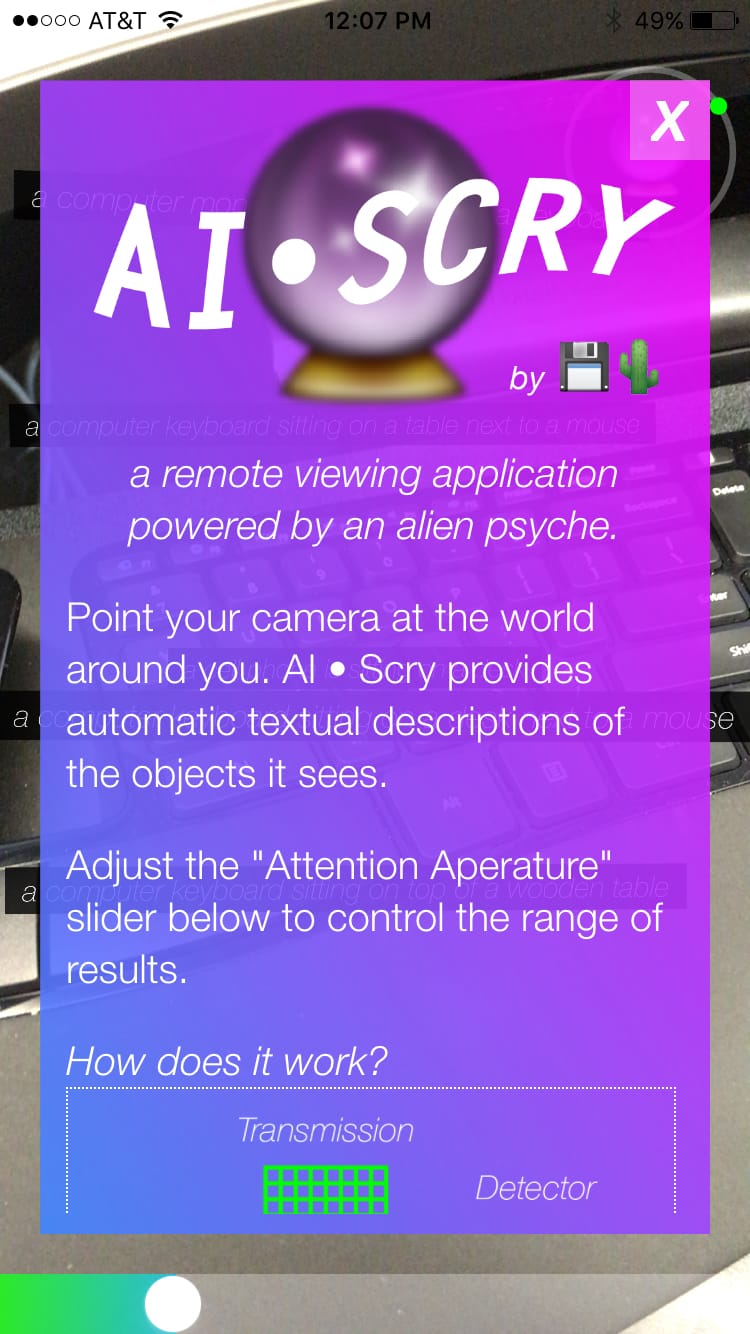

A couple of months ago, I drove about 45 minutes to Emeryville to meet Sam Kronick and Tara Shi, two members of the Oakland-based art and design collective Disk Cactus. The duo created AI*SCRY (pronounced “eye-scry”), an app available through iTunes whose name combines “artificial intelligence” with scrying, the practice of gazing into a translucent object, typically a crystal ball, for prescient visions. While scrying is often associated with fortunetelling or divination, it’s interesting to think of a smartphone as an object that can tell the future (e.g., weather forecasts, stock market information). One source of inspiration for the project was the work of computer scientist Andrej Karpathy, in particular his blog post “The Unreasonable Effectiveness of Recurrent Neural Networks,” which explores how recurrent neural networks generate text, including captions for images.

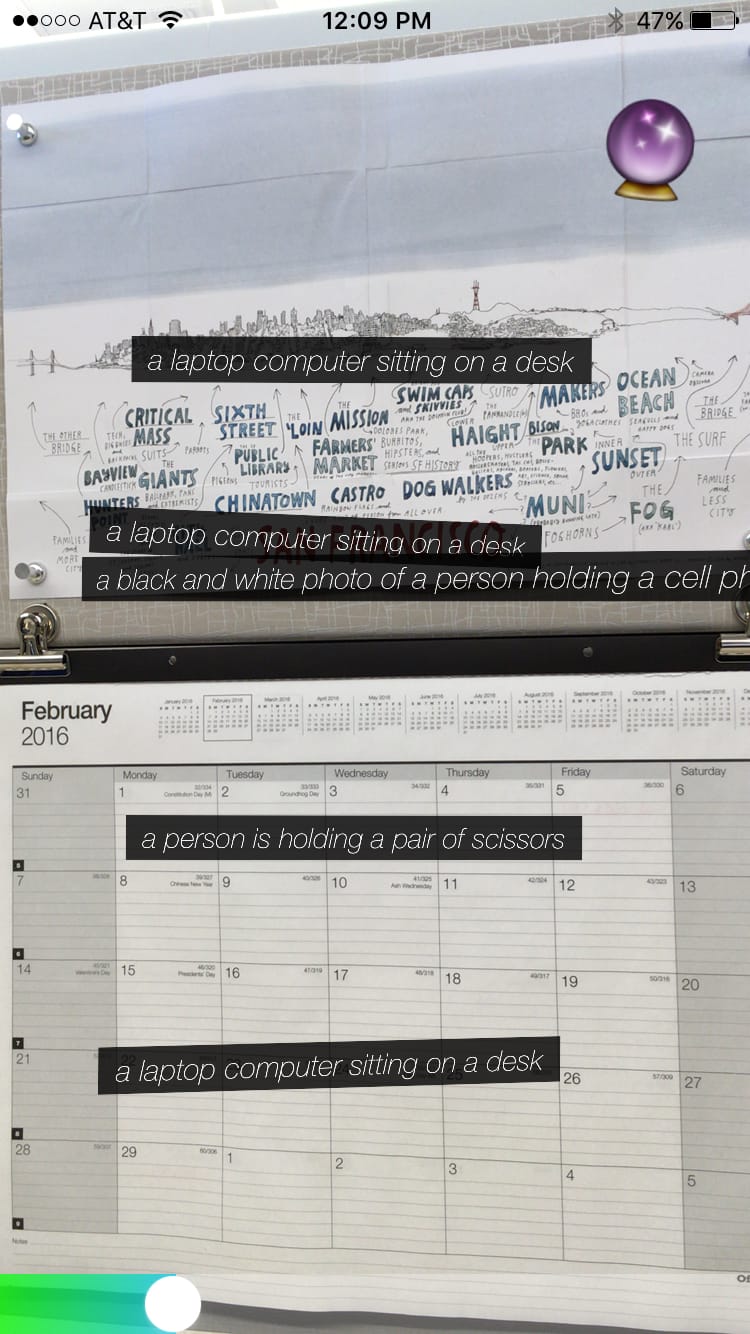

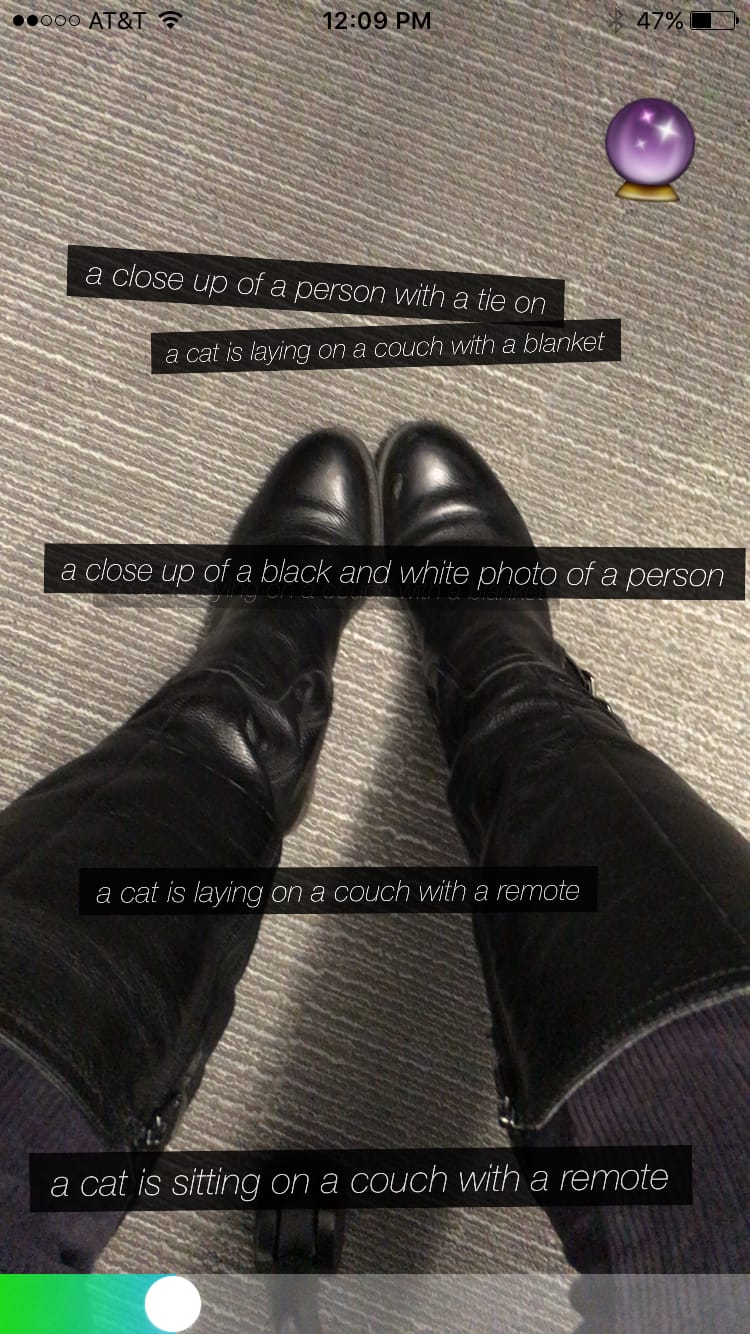

Using the app is essentially like using your smartphone camera: you hold up AI*SCRY as if you were about to snap a photo of an object. But unlike taking a photo, once an image of your immediate surroundings registers on the screen, lines of text slowly emerge. The captions, whose vocabulary comes from the image recognition dataset Microsoft COCO (Common Objects in Context), describe the objects the AI registers. According to Kronick and Shi, the app is especially adept at identifying scissors and rocks. It is far from perfect, though: it read my red notebook and pen as “a cup of coffee and a banana on a table.” Yet this imperfect perception is central to the project, which sets out to expose how far image recognition still has to go before it can describe our surroundings with real precision.

The duo went into the project knowing that their AI wasn’t going to be entirely accurate; they were well aware of how hard it is to write captions for every object one might want identified (the captions are compiled by workers on Amazon Mechanical Turk). That said, I was pleasantly surprised when the app recognized the salad I had for lunch as “a bunch of vegetables and a bowl of food.”

The app also has a sound component that makes it worth the playtime. Turn up the volume and you can hear a wide array of automated voices reading the lines on the screen until they dissolve into a strange cacophony of robotic voices seemingly talking over one another. Likewise, when you slide the bar at the bottom of the screen to the right, a flurry of emojis appears and the lines of text turn into far more poetic and abstract perceptions. As I pointed my smartphone at the tightly woven carpet on my office floor, the following text appeared: “extremely labelled [sic] patty left arrive has rice truck at in the little” and “rearview mirror sitting on art smoking covered some avenue.”

When asked about the nature of the work as a conceptual art piece, Shi responded:

> The decision to make it an app makes it interesting because it makes the piece accessible to a broad audience. We don’t know who will possess the app. The childlike, whimsical, and fun quality of it gives it a good access point. You get to interact with it and make a decision. You get to learn about it and it’s intimate because it is in the world you’re in. You can start to have that conversation about whether it is biased and ask questions such as, ‘How is this type of intelligence different and specific compared to our understanding of the world?’ Which is why it would be considered art because it is an entry point into a conversation.

Kronick noted that the app is not designed to be as precise as Siri is in responding to iPhone users, nor is it meant to adapt to a user’s personality, quirks, and questions. “It’s not just about reading posts, but being able to look at your images and see what is going on (i.e., food, etc.),” Kronick explained. “Being able to read what is happening through an image as opposed to text is a big challenge of artificial intelligence. But when I started looking more into how those algorithms work and how people talk about and describe them, there is some element of magic to them.”

While there is nothing overtly ludic about holding an app up to your surroundings and having it tell you what it sees, the experience carries a sense of play and wonder. And while the duo do not necessarily consider the project an artwork, they acknowledge that it straddles that line. AI*SCRY is an imaginative and engaging app that reveals the shortcomings, and the often poetic mishaps, of machine vision. Mechanical Turk workers may provide objective (or at least as objective as possible) descriptions of cats, dogs, bicycles, and people, among other things, but, as AI*SCRY shows quite effectively, machine vision continues to rely heavily on human perception.

AI*SCRY is available to download on iTunes.