How One Meme Reveals the Difference in How Humans and AI "See"

As AI technology grows more sophisticated, neural networks can generate pictures people are comfortable looking at. It takes a surreal reject of an image to remind us of how differently a computer perceives the world.

Hidden among the hundreds of items you scroll past, swipe aside, or click through each day is a new kind of image. Between screen fatigue and the need to see another new thing, you probably won’t notice. Such pictures are designed to look like everything else. Only very recently have the images that algorithms create (unsupervised) become almost indistinguishable from real photographs. Pictures found online aren’t trustworthy in the first place thanks to manipulation tools like Photoshop, but here it’s not just a matter of editing an existing image or blending two photos together. These programs are trained on millions of existing images and can then generate new pictures pixel by pixel. The results resemble the images fed into them, but do not imitate any specific one.

The power to see the future was previously limited to psychics and shamans. Now researchers (and, increasingly, anyone with a computer) use pattern recognition to precognitive ends, feeding their programs artifacts of the past to generate data on the future. Hito Steyerl referred to this use of artificial intelligence as “trying to preemptively make the future as similar to the past as possible,” and said that the products of these systems “project the future instead of documenting the past.” But while these images are supposed to resemble human perception as closely as possible, the algorithms are only reading the patterns in the pixels. “The trillions of images we’ve been trained to treat as human-to-human culture are the foundation for increasingly autonomous ways of seeing that bear little resemblance to the visual culture of the past,” artist Trevor Paglen wrote of visual culture’s turn toward what is legible to machines.

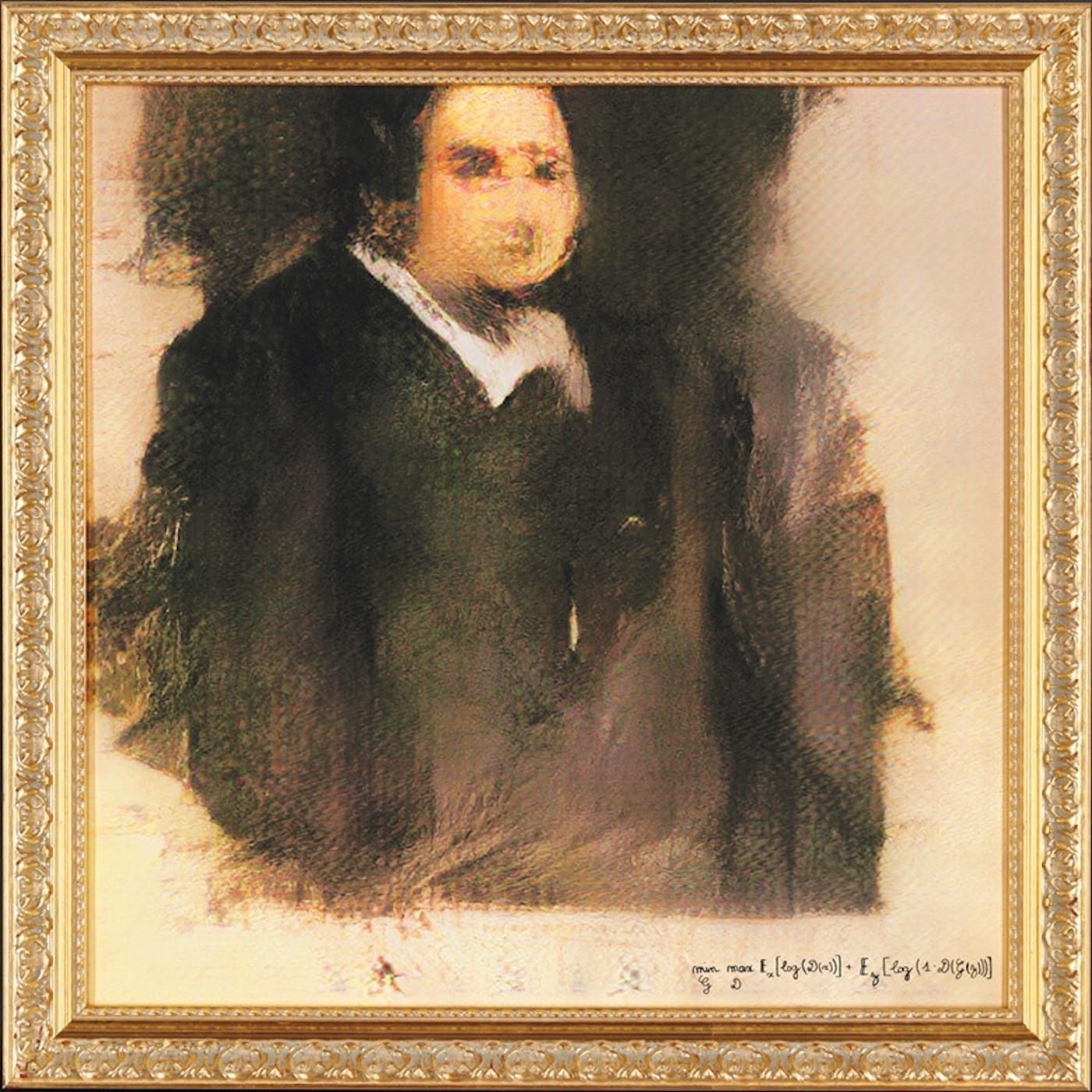

It is difficult to grasp a concept as nebulous as the tension between the visual artifacts of the past and any dramatic perceptual change toward the future. Consider this meme:

Name one thing in this photo pic.twitter.com/zgyE9rL2XP

— dumbass ass idiot ? (@melip0ne) April 23, 2019

Commenters combined internet humor with genuine distress, describing the image as “visual dysphoria,” “uncanny,” and “cursed.” The best description is also the driest, written by @description_bot (which seems not to be a bot, but a group of human volunteers writing captions to make image-based tweets more accessible): “An optical illusion that features various different objects that sort of look familiar, but are impossible to make out. Items are cluttered together, but none of them are recognizable.” To look at it feels like trying to recall a word that turns out not to exist.

The original source of this image is unknown, making it even more mysterious. A Reddit thread claimed it was made by researchers to emulate the feeling of having a stroke, but the author didn’t provide citations when prompted. Anyone familiar with the aesthetic of deep learning technologies will tell you that it has all the markers of a neural network’s dud. It’s an image occupying the edge of recognition, and because of that, it gets looked at more closely than one that can be readily categorized. It comes so close to communicating some sort of semantic meaning without ever quite doing so. Each piece in the image (I’m hesitant to call them “objects” or “items”) feels hyper-specific, but gazing for more than a moment reveals that feeling to be misplaced. In her essay on the automation of surveillance, “Seeing, Naming, Knowing,” Nora Khan explains what happens when what we’re shown fails to match up with what we expect, how it reveals “how tenuous our hold on reality is, how deeply tied it is to facial recognition and cognitive faith, how quickly a sense of safety is lost without it. One screwy, distorted face unpins the fabric.”

Generative adversarial networks, or GANs, were first developed in 2014 by research scientist Ian Goodfellow and his colleagues. Most of the faces generated by early iterations of the networks were so distorted that the technology had little practical use. GANs are now the go-to for original image generation. In a Medium article from 2017, Kyle McDonald, an artist and programmer, outlined the ways in which the human eye might detect a GAN-generated image. He describes tells that range from the more straightforward symptom of “Straight hair [that] looks like paint” to the esoteric epithet of “Semi-regular noise.” He explains GANs as working like a cat-and-mouse game, with two networks trying to outsmart each other. But it’s really the human and the algorithm playing cat and mouse: our eyes trying to identify reality, and the outputs attempting to fulfill our expectations of what a real face looks like. When McDonald updated the article in late 2018, he acknowledged the rapid improvement in GANs’ facial outputs. Many of the telltale signs he pointed out earlier no longer apply. As a culture, we have barely started realizing and discussing the implications of this.
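For readers who want to see the cat-and-mouse game between the two networks in concrete terms, here is a minimal sketch of the scoring each side tries to minimize. It assumes the standard cross-entropy formulation and the commonly used "non-saturating" generator loss. The function names and the sample scores are hypothetical, chosen only for illustration, and no actual networks are trained here.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Cross-entropy loss for the discriminator.

    d_real and d_fake are lists of probabilities (strictly between 0 and 1)
    that the discriminator assigns to "this image is real".
    The loss is low when real images score high and fakes score low.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss.

    The loss is low when the discriminator scores the fakes as real.
    """
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator scores (illustrative values, not measured data).
early_real, early_fake = [0.90, 0.95], [0.05, 0.10]  # fakes are easy to spot
late_real, late_fake = [0.50, 0.50], [0.50, 0.50]    # discriminator can only guess

print("early:", discriminator_loss(early_real, early_fake), generator_loss(early_fake))
print("late: ", discriminator_loss(late_real, late_fake), generator_loss(late_fake))
```

In the "early" case the discriminator's loss is small and the generator's is large; in the "late" case the discriminator is reduced to guessing and the positions reverse. Training alternates between nudging each network to lower its own loss, which is the sense in which the two try to outsmart each other.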

You have probably already heard of GANs in some capacity. One played a central role in the creation of the highly hyped AI-generated portrait that sold at Christie’s for $432,500. GANs are also behind the recent incidents of deepfakes. By automating what were once complex video-editing tasks, such as blending and reshaping mouths to lip-sync or grafting the face of one person onto the body of another, GANs have made creating misleading videos for fun, revenge, or propaganda easier than ever. There’s a deep potential for chaos here.

In the opening of Ways of Seeing, John Berger writes, “The relation between what we see and what we know is never settled.” As AI advances to levels of pattern recognition and generation that match human perception, it will make us feel comfortable in thinking we know what we see in its images. It takes a surrealist reject of an image to remind us that our perception works on a different plane than that of computers. We are poor in information, as we cannot process the sheer amount of data a machine can, but rich in real-world context, something that is still lacking in these systems.

Proposed solutions for detecting fakes vary, including a camera that inserts a digital watermark in undoctored images, and a recent digital forensics technique that reads the “soft bio-metric markers” of real human activity. Such tools may only be case-specific, but what they have in common is that they don’t rely on the gestalt reality that a person sees, and instead deal with the cascades of data a computer sees. Progress in GANs is quickly outpacing any residual patina of the uncanny. The realistic videos by the design agency Canny, which were meant to spread awareness of the phenomenon and featured public figures like Kim Kardashian and Mark Zuckerberg, and the malicious yet short-lived computer program DeepNude, are examples of implementations that can slip beyond our natural ability to detect fakes. Seeing may not be believing, but exposure to the surreal mistakes these programs make promotes awareness, warning us to expand our critical vision beyond what lies before our eyes.

Khan recognizes the revolutionary potential of occupying the space between seeing and knowing: “We get the sense that this unmooring is also an opportunity; a face that is only partly readable can be a challenge for better reading. A better visual reading can expand our sense of possibility.” While we still have the capacity to be shaken awake by uncanny outputs, we should pay attention and learn how to spot and track such imagery. Our eyes will almost certainly not keep up, but through a collective awareness, we may be able to build tools that can.