600,000 Images Removed from AI Database After Art Project Exposes Racist Bias

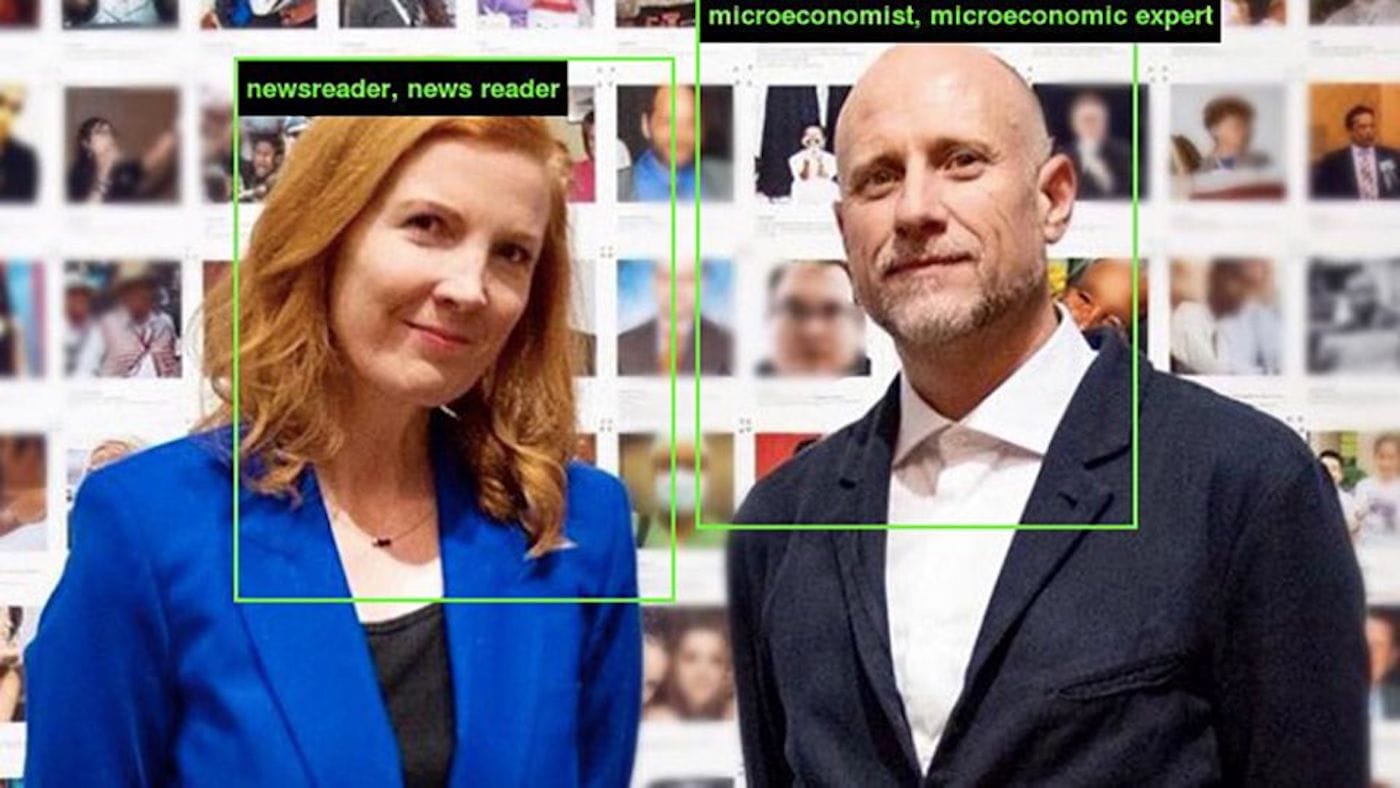

The image tagging system that went viral on social media was part of artist Trevor Paglen and AI researcher Kate Crawford's attempts to publicize how prejudiced technology can be.

ImageNet will remove 600,000 images of people stored on its database after an art project exposed racial bias in the program’s artificial intelligence system.

Created in 2009 by researchers at Princeton and Stanford, the online image database has been widely used by machine learning projects. The program has pulled more than 14 million images from across the web, which have been categorized by workers on Amazon Mechanical Turk, a crowdsourcing platform through which people can earn money performing small tasks for third parties. According to the results of an online project by AI researcher Kate Crawford and artist Trevor Paglen, prejudices in that labor pool appear to have biased the machine learning data.

Training Humans — an exhibition that opened last week at the Prada Foundation in Milan — unveiled the duo’s findings to the public, but part of their experiment also lives online at ImageNet Roulette, a website where users can upload their own photographs to see how the database might categorize them. (Crawford and Paglen have also released “Excavating AI,” an article explaining their research.) The application will remain open until September 27, when its creators will take it offline; in the meantime, ImageNet Roulette has gone viral on social media because of its spurious, and often cringeworthy, results.

For example, the program defined one white woman as a “stunner, looker, mantrap,” and “dish,” describing her as “a very attractive or seductive looking woman.” Many people of color have noted an obvious racist bias to their results. Jamal Jordan, a journalist at the New York Times, explained on Twitter that each of his uploaded photographs returned tags like “Black, Black African, Negroid, or Negro.” And when one user uploaded an image of the Democratic presidential candidates Andrew Yang and Joe Biden, Yang, who is Asian American, was tagged as “Buddhist” (he is not) while Biden was simply labeled as “grinner.”

In recent months, researchers have explored how biases against women and people of color manifest in facial recognition services offered by companies like Amazon, Microsoft, and IBM. Critics worry that this technology, which is increasingly sold to state and federal law enforcement agencies as a crime-fighting tool, might encourage police overreach. There are also concerns that such tools might unconstitutionally violate a person’s right to privacy under the Fourth Amendment.

“This exhibition shows how these images are part of a long tradition of capturing people’s images without their consent, in order to classify, segment, and often stereotype them in ways that evokes colonial projects of the past,” Paglen told the Art Newspaper.

For the artist, ImageNet’s problems are inherent to any kind of classification system. If AI learns from humans, the rationale goes, then it will inherit all the same biases that humans have. Training Humans simply exposes how technology’s air of objectivity is more façade than reality.